A great deal of discussion lately has centered around the idea of a recent “slowdown” in global temperature. With 2016 destined to break the hottest-year-ever record for the third time in a row, it would seem to be over now, even if it was real. I say “if” because scientists disagree about whether or not it ever was even a real thing — and I’m one of those who thinks it was not.

A new paper in Nature Communications investigates the likelihood of a “prolonged slowdown in global warming in the early 21st century.” But like most (if not all) papers that discuss the so-called “slowdown” it does nothing to establish that such a slowdown is real, that it was anything but random fluctuation that looks like a slowdown. Those who do statistics, and do it right, learned one of its most important lessons a long, long time ago: that “looks like” is a very bad way to draw conclusions.

Their central theme is that from 1998 through 2015 global surface temperature rose at a rate of merely “about” 0.1 °C per decade, and that using estimated multi-decadal variability from their simulation model (at the Geophysical Fluid Dynamics Laboratory) there’s a non-trivial possibility this could persist, even until 2030. They use only one computer model, one with particularly high multidecadal variability, but I’m not qualified to comment on that. But the paper starts from more than one mistaken premise. First, that the rate of “global warming” was a mere 0.1 °C per decade, and second, that we need anything new to understand the observed variations in global surface temperature or its estimated rates of increase.

Let’s start with that “0.1 °C per decade” thing. Setting aside the fact that referring (in the paper’s title) to “global warming” quite ignores all the other things besides just surface temperature that have changed during that time span, we can compute the estimated warming rate and its uncertainty (something the authors don’t bother to do) from 1998 through 2015 for the five best-known surface temperature data sets (from NASA, NOAA, the Hadley Centre/Climate Research Unit, Cowtan & Way, and the Berkeley Earth Surface Temperature project):

I find it rather troubling that they consistently refer to the warming rate as “about 0.1” when all five data sets give a central estimate higher than that. It’s true that they all include 0.1 in their (roughly 95%) confidence intervals, but four of the five also include 0.2 (their stated rate for climate models). That 4 out of 5 include both values is a sign that the confidence intervals are very wide, and that making flat statements about a “slowdown,” let alone quoting a numerical figure lower than all five central estimates, is statistically “dodgy” at best.
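For readers who want to check this kind of number themselves, here is a minimal sketch of estimating a linear trend and a rough 95% interval from annual anomalies. It assumes ordinary least squares with uncorrelated noise and a critical value of about 2. The function name `trend_with_ci` and the made-up anomaly series are illustrative only; they are not any of the five data sets. Real temperature data are autocorrelated, which makes honest intervals wider than this.

```python
import math

def trend_with_ci(years, temps, t_crit=2.0):
    """OLS slope (°C/yr), its standard error, and a rough 95% interval.

    Assumes uncorrelated residuals; t_crit=2.0 is an approximation.
    """
    n = len(years)
    if n < 3:
        raise ValueError("need at least 3 points")
    xbar = sum(years) / n
    ybar = sum(temps) / n
    sxx = sum((x - xbar) ** 2 for x in years)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(years, temps))
    slope = sxy / sxx
    intercept = ybar - slope * xbar
    resid = [y - (intercept + slope * x) for x, y in zip(years, temps)]
    s2 = sum(r * r for r in resid) / (n - 2)   # residual variance
    se = math.sqrt(s2 / sxx)                   # standard error of the slope
    return slope, se, (slope - t_crit * se, slope + t_crit * se)

# Made-up anomalies (°C), NOT real data: a steady trend plus a wiggle.
years = list(range(1998, 2016))
temps = [0.40 + 0.015 * (y - 1998) + 0.1 * math.sin(2.3 * i)
         for i, y in enumerate(years)]

slope, se, (lo, hi) = trend_with_ci(years, temps)
print(f"rate: {10 * slope:.2f} °C/decade, "
      f"rough 95% interval: {10 * lo:.2f} to {10 * hi:.2f}")
```

With only 18 annual values the interval is wide even under these generous assumptions, which is why quoting a single rate without its uncertainty says so little.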

They also mention that using the time span from 1998 through 2015 enables them to include el Niño peaks at both ends. But the real peak of the recent el Niño isn’t 2015, it’s 2016. And what happens if we estimate trend rates for the time span 1998 through 2016 (using the year-so-far values) rather than through 2015? We get this:

If we bracket the time span with both actual el Niño peaks, then 4 out of 5 data sets give a central estimate which is closer to their standard of 0.2 °C per decade than their standard of 0.1 °C per decade.

Which makes one wonder: what is the likelihood of the purported “slowdown” lasting 15 years longer (through 2030), when it can’t even last one year longer?

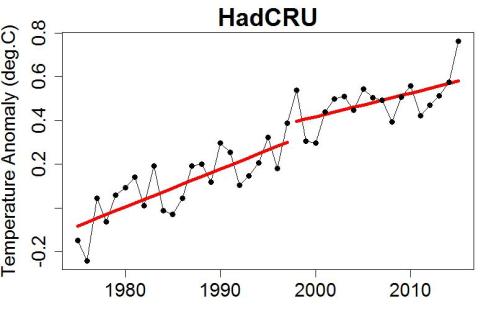

Then there’s the “broken trend” issue. Looking at data from 1975 through 2015, and using the data set with the lowest warming rate 1998-through-2015 (HadCRU), here’s the trend model required to claim a “slowdown” during the 1998-through-2015 period:

This model doesn’t just have a lower central estimate of slope, it has a sudden jump as well, one nearly as big as what they claim for the rate per decade during the latter time span. If you want to “explain” a lower rate of increase (without bothering to demonstrate that it really exists), perhaps you should begin by explaining why global surface temperature started that time span with a jump nearly as big as your claimed decadal rate of increase.

We can fit more realistic models, with a rate change during the period from 1998 onward but which do not include a jump discontinuity. Let’s not omit 2016, since the whole subject is the possibility of a sustained episode of “slowdown” (possibly all the way through 2030). What warming rates would we get for the 1998-onward period? These:

Confidence intervals for all five data sets include 0.2 °C per decade, their stated value of model averages, but all five exclude 0.1, their stated value for “prolonged slowdown.” How can one discuss the possibility of a prolonged slowdown, when the data we have already contradict it?
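The difference between the two kinds of model can be sketched in a few lines. This is only an illustration under stated assumptions: plain least squares, a breakpoint fixed at 1998, and a made-up series with a constant trend and one warm spike. The helper names (`broken_trend`, `continuous_trend`, and the small solver) are hypothetical, not the code behind the figures discussed here.

```python
import math

def ols_line(xs, ys):
    """Intercept and slope of an ordinary least-squares line."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    slope = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys)) / sxx
    return ybar - slope * xbar, slope

def solve(a, b):
    """Solve a small linear system by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def least_squares(design, ys):
    """Least-squares coefficients via the normal equations."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)]
           for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(design, ys)) for i in range(k)]
    return solve(xtx, xty)

def broken_trend(years, temps, break_year):
    """Two independent lines: (slope before, slope after, jump at the break)."""
    before = [(x, y) for x, y in zip(years, temps) if x < break_year]
    after = [(x, y) for x, y in zip(years, temps) if x >= break_year]
    a0, b0 = ols_line(*zip(*before))
    a1, b1 = ols_line(*zip(*after))
    jump = (a1 + b1 * break_year) - (a0 + b0 * break_year)
    return b0, b1, jump

def continuous_trend(years, temps, break_year):
    """One line whose slope may change at break_year, with no jump.

    Assumes years are sorted ascending. Returns (slope before, slope after).
    """
    x0 = years[0]
    design = [[1.0, x - x0, max(0.0, x - break_year)] for x in years]
    _, b, c = least_squares(design, temps)
    return b, b + c

# Made-up series, NOT real data: constant trend, a wiggle, a warm 1998.
years = list(range(1975, 2017))
temps = [0.017 * (y - 1975) + 0.08 * math.sin(1.7 * i) + (0.2 if y == 1998 else 0.0)
         for i, y in enumerate(years)]

b0, b1, jump = broken_trend(years, temps, 1998)
c0, c1 = continuous_trend(years, temps, 1998)
print(f"broken:     {10 * b0:.2f} then {10 * b1:.2f} °C/decade, jump {jump:+.2f} °C")
print(f"continuous: {10 * c0:.2f} then {10 * c1:.2f} °C/decade")
```

The point of the comparison is that the broken model is free to trade slope for a jump, while the continuous one has to account for everything that came before the breakpoint.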

As for the variability in warming rates (whether statistically meaningful or not), do we really need to invoke multi-decadal variability to explain it? We can take each data set and remove an estimate of variability caused by much more short-term factors, namely el Niño, volcanic eruptions, and solar variations (using a method like that from Foster & Rahmstorf). The data corrected for short-term fluctuation factors look like this:

The dashed lines show their period of claimed “slowdown” — but it not only fails statistical tests, it doesn’t even pass the “looks like” test.
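The general idea of removing short-term factors can be sketched as a multiple regression: fit temperature against time plus the three factors, then subtract the fitted factor contributions. This is a simplified sketch, not the published procedure. It omits the lags a fuller treatment would allow for each factor, and the function `remove_short_term` and all input series are made up for illustration.

```python
import math

def solve(a, b):
    """Solve a small linear system by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def least_squares(design, ys):
    """Least-squares coefficients via the normal equations."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)]
           for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(design, ys)) for i in range(k)]
    return solve(xtx, xty)

def remove_short_term(temps, enso, volcanic, solar):
    """Regress temps on a linear time term plus three factors.

    Returns (adjusted series, coefficients), where the adjusted series is the
    original minus the fitted el Niño, volcanic, and solar contributions.
    """
    n = len(temps)
    design = [[1.0, float(i), enso[i], volcanic[i], solar[i]] for i in range(n)]
    coef = least_squares(design, temps)
    adjusted = [temps[i] - (coef[2] * enso[i] + coef[3] * volcanic[i]
                            + coef[4] * solar[i]) for i in range(n)]
    return adjusted, coef

# Made-up inputs, NOT real indices: an oscillating "el Niño" index, one
# "eruption" lasting two years, and an 11-year "solar cycle".
n = 40
enso = [math.sin(1.3 * i) for i in range(n)]
volcanic = [1.0 if i == 16 else 0.5 if i == 17 else 0.0 for i in range(n)]
solar = [math.cos(2 * math.pi * i / 11) for i in range(n)]
temps = [0.1 + 0.018 * i + 0.08 * enso[i] - 0.3 * volcanic[i] + 0.05 * solar[i]
         for i in range(n)]

adjusted, coef = remove_short_term(temps, enso, volcanic, solar)
print(f"fitted trend: {10 * coef[1]:.2f} °C/decade")
```

What is left after the subtraction is not just the greenhouse-gas trend; it is everything not attributed to those three factors, noise included.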

In my opinion, this paper doesn’t pass muster. They discuss a “slowdown” without providing any real evidence of it, they fail to estimate confidence limits for their “slowdown” rate which happens to be lower than the central estimate for all five major global data sets, they ignore the presence of a jump discontinuity in the model required to get such low estimates, they fail to mention that even the variability which does exist is readily explained by short-term factors without having to invoke multi-decadal variability, and their central thesis — that a “slowdown” might persist for another 15 years — is already contradicted by a single additional year. I consider its many, and very serious, flaws to be telling evidence that the whole “slowdown” idea was misguided from the very start.

The ‘jump discontinuity’ problem is due to their cherry picking 1998 as the start point, yes?

[Response: It’s due to the fact that fitting only the data from 1998 through 2015 ignores everything that happened before. But basically yes, its size is because of picking 1998 as the starting point.]

They were trying to be fair, going from one high to the next. Note that stopping in a high year would also cause a jump discontinuity the other way. Not the best use of the data, but not so bad, either. That is, if only they had used the right ending year (and the other errors Tamino points out).

OT: I think you’ll find this interesting, or maybe that it needs a closer look:

http://science.sciencemag.org/content/early/2016/11/30/science.aah7393

Title: “More tornadoes in the most extreme U.S. tornado outbreaks”

From abstract:

“Extreme meteorological environments associated with severe thunderstorms show consistent upward trends, but the trends do not resemble those currently expected to result from global warming.”

Hi Tamino. You say: “it does nothing to establish that such a slowdown is real, that it was anything but random fluctuation that looks like a slowdown”.

I feel like you and the authors of these slowdown papers are talking past each other. Specifically, many of these authors take the worldview that the slowdown could very well be the result of “random fluctuations” but that this does NOT mean that the slowdown was not “real”. It could have been random fluctuations (that can be explained physically) and “real” at the same time. For example, global mean temperature in 2011 was colder than in 2010. Somebody could absolutely write a paper on why global warming paused/reversed over that 2 year timeframe – there would be a real physical reason for it.

I guess the disagreement stems from different definitions of “global warming”. You are defining global warming as a smoothed underlying ‘signal’ that can be derived statistically, while a lot of these papers are defining “global warming” as the rate of warming over any arbitrarily defined span of time.

Global warming is short for human caused global warming. The rest is called climate variability in climatology.

The study of climate variability is a huge and important field of study that existed before this “hiatus” madness and will exist after it. It even has its own large organization: CLIVAR (Climate and Ocean: Variability, Predictability and Change), one of the four core projects of the World Climate Research Programme (WCRP).

Something slowed down. Something paused. I would say that suggests that global warming slowed down, the process that makes it warmer.

That is how the public interprets it and that is why Nature (Communications) publishes it. If your interpretation were the general view, this would have been a study for a normal good specialist journal (and no one outside of science would have heard about it).

“Something causes” a compound pendulum to be at a particular place at a particular distant future time. However predicting that particular place in advance from any particular factors you care to name may well be impossible even in principle.

Studying noise for more detail is a commendable aspect of physics. Imputing meaning to noise that cannot even in principle give up further details may be less commendable. Statisticians, of course, come down a bit differently in this debate than do physicists. Maybe statistics people stop looking for further predictability too early. Maybe. But at some point even physicists have to realize there is no more predictability to be had.

Also internal variability can be predictable. People are working on seasonal and decadal climate prediction. In the mid-latitudes this does not work well yet, but in the tropics it is somewhat decent and helps with investment decisions, insurances, crop selection.

The historian Sam White made the argument that the little ice age was so disruptive not because it was colder, but because the weather was very unpredictable in that period. If we can improve our prediction capabilities, that can compensate for some of the uncertainty from bringing the climate system into uncharted waters.

Even without being able to predict, a good understanding of variability is important for adaptation. The height of the dikes is not only determined by the mean sea level or river run off, but also by its variability. This variability will also change and it is thus important to know how much.

True enough. But I think the problem here may be that the internal variability in the present series is such that once the trend is extracted, and perhaps a few other factors as well (as in Cowtan & Way), the remaining residual variation is pretty darn close to pure noise, and further chasing really may be futile without fundamental changes in measurement regimes.

Maybe not. But it’s something to consider.

Re. “something slowed down”, do you really posit this when there is a downturn in thermal noise or radioactive decay for a short period of time? That’s what I’m trying to get at.

If there was a period with less radioactive decay out of chance, I would not call that a slowdown or a pause or a reduction. That is just noise.

If the radioactive source moves away from my detector, that is a real change and when we know that happened or once we have enough data points to show statistically that something has happened (and it was not chance) then you call it a slowdown of the decay rate.

Right now, given present measurement schemes as the input at least, the best one can say statistically is that there is no need whatever to impute any additional cause at all to any recent climate “wiggle” any more than there is a need to impute a cause to wiggles in decay event counts/sec. The wiggles are inside the error bars. Nothing predictable may have “caused” them. Hard as that is to accept, God may very well play dice.

Of course that is from the stats perspective.

There is the open question whether better inputs could “see” smaller scale causes, of course. But there is a limit somewhere out there in that direction as well.

>You are defining global warming as a smoothed underlying ‘signal’ that can be derived statistically, while a lot of these papers are defining “global warming” as the rate of warming over any arbitrarily defined span of time.

Isn’t that weather? Climate being the ‘trend’ over 30 years?

PTBrown, even the concept of global mean temperature is subject to definition, and when you think about what it really means, you’ll realize that it has to change very smoothly, but our measurements of it can change more rapidly.

What a measurement like HadCRU is telling us is a spatially weighted average from a bunch of thermometers in the air, close to ground level. But a true global mean temperature would be the mean temperature of everything that people contact – say the top meter of dirt over the whole planet, the top 100 meters of ocean, and maybe the bottom 200m of the atmosphere, all properly weighted by heat capacity. You can see how that could only change very smoothly – basically it can only change by the total radiative imbalance of the planet. It could still vary somewhat, say due to ocean currents moving more or less heat to the poles where it’s more efficiently radiated away, but it’s not going to vary quickly. About the most abrupt thing that can happen to it is a large tropical volcano causing a couple of years of cooling from aerosols.

Getting back to those thermometers hanging in air – they can register changes due to changing balance between the ocean and the atmosphere (such as El Nino) or even just random effects based on where all the individual thermometers are located. Thus we expect the measurements to have both short term movements we understand (like El Nino) and short term movements that are pure noise. But anyone who discusses global average temperature, and puts a jump discontinuity into it, doesn’t fundamentally understand what they’re talking about.

Tamino – how does your El Nino/solar/volcanism smoother, which filters out temporary influences on global average surface temperature to leave the underlying GHG-induced trend (as described in your Jan. 27 2016 blog post at https://tamino.wordpress.com/2016/01/29/correcting-for-more-than-just-el-nino/#more-8167), compare to the similar filter published by Foster & Rahmstorf in 2011?

Which of the two “filters” explains more of the interannual variability, leaving just the underlying GHG trend?

[Response: The revised method is only a small modification of the previously published one, and explains slightly more of the interannual variability, but the difference is not much. What they leave behind is not necessarily just the GHG trend, it’s everything not due to el Nino/volcanoes/solar. And of course, neither is a perfect estimate (but I think they’re both pretty good).]

The key is using 1998, the greatest statistical outlier in many decades, as the starting point. For example, 1998-2008 is the only 10 year period in the last quarter century in which the final year is lower than the starting point, so that it “looks” like it’s hardly rising, whereas if you remove both end years, the overall temperature rise becomes obvious. Scientific reason would say not to start any time period with a statistical outlier, yet that is the year climate deniers, for obvious reasons, always start any time period showing a slowdown, or “hiatus” as they more often claim it to be. It is much more normal to start time periods with a rounded year like 2000, 1990, 1995, but no, it has to be 1998, because no other starting year can make it even appear like there’s a slowdown or hiatus. It is clear intentional deception, for which climate deniers, led by fossil fuel industrialists, are notorious, & considering that the consequences of not addressing this imminent threat are existential, it is criminal to the highest degree.

I was struck by the variance over time among satellite-based measurements, as discussed in a recent Tamino post. Here, speaking of problems in science by “looks like” … it looks like there’s a period in the middle or late-middle of the “removing El Nino, Volcanism, Solar” graph in which the spread across temperature estimation teams/methods is lower than elsewhere in the figure. My first question would be, understanding that there are differences in the area weightings for the Arctic/interpolations/etc, why might that occur? But learning from the main thrust of this post, I’ll instead ask if there are significant trends in the differences among the temperatures estimated by these teams/methods.

I’ve waited all day to see if anybody from Climate Etc. picked up on these sentences from the paper in question:

The synthetic series in Fig. 5a also show examples of greatly accelerated warming lasting a decade or more, which are evidently spring-back effects as an internal variability cooling episode is followed by a strong internal variability warming episode. The strong warming episodes are further amplified by the underlying forced warming trend. One extreme example shows a warming of almost 1 °C in 15 years—a much greater 15-year warming rate than has occurred in the observations to date (red curves). These spring-back warmings illustrate another important potential consequence of strong internal multidecadal variability as simulated in CM3, and reinforce the need to better understand whether such internal variability actually occurs in the real world. …

Because of the AMO crowd’s incessant chorus that natural variability – as represented by the negative phase of the AMO/the stadium wave/low climate sensitivity – is about to bring global cooling, people appear completely unaware that it could instead bring a nasty heatwave: a rapid and taller step up in GMST than seen in the GMST record to date.

Doesn’t picking 1998 as the start year because it was a hot year invalidate the calculated standard deviations and confidence limits? My understanding is that the calculation assumes that the sample years are randomly picked, not selected on the basis of their temperatures.

Yes.
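A quick simulation illustrates the point. This is a sketch under simple assumptions: a constant trend, independent Gaussian noise, and a fixed 18-year fitting window. The function `simulate` and every parameter value are made up for illustration. It compares the average fitted slope when the start year is chosen at random with the average when the start year is chosen because it sits on the largest upward fluctuation.

```python
import random

def ols_slope(xs, ys):
    """Slope of an ordinary least-squares line."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    return sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys)) / sxx

def simulate(trials=2000, n_years=40, span=18, trend=0.018,
             noise_sd=0.1, seed=0):
    """Mean fitted slope for a random start vs. a hottest-year start."""
    rng = random.Random(seed)
    starts = range(n_years - span + 1)   # every start that leaves a full span
    xs = list(range(span))
    fair_total = picked_total = 0.0
    for _ in range(trials):
        noise = [rng.gauss(0.0, noise_sd) for _ in range(n_years)]
        temps = [trend * i + e for i, e in enumerate(noise)]
        fair = rng.choice(starts)                     # start chosen blindly
        hot = max(starts, key=lambda i: noise[i])     # start chosen for being hot
        fair_total += ols_slope(xs, temps[fair:fair + span])
        picked_total += ols_slope(xs, temps[hot:hot + span])
    return fair_total / trials, picked_total / trials

fair, picked = simulate()
print(f"true trend:        {10 * 0.018:.2f} °C/decade")
print(f"random start year: {10 * fair:.2f} °C/decade on average")
print(f"hottest start:     {10 * picked:.2f} °C/decade on average")
```

The underlying trend never changes in this simulation, yet starting on a hot year pulls the average fitted slope below it, so the nominal confidence limits no longer mean what they claim.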

Excellent. I keep wondering why the climate science community, generally, has bought into the “slowdown” meme, when there was no slowdown that passes statistical muster. This is the second paper over the last year or so which has bought the slowdown story hook, line, and sinker. There is no need to research why there was a slowdown or how long it could last when there was never actually any slowdown, as you’ve amply demonstrated here several times.

Perhaps the idea of a slowdown is somehow reassuring? That’s the only reason I can think of, unless the continuous repetition by the deniers has paid off in spades.

Have you had any communications with climate scientists who’ve bought into the meme?

You know, the rate of global temperature increase seems so rock solid as to almost be boring. I wonder if there are contrarian economists who devote as much effort into arguing in academic journals that stock market values have “paused”, and might stay “paused” for some time.

I used your charts (with attribution) in my blog for this post: http://volewica.blogspot.com.au/2016/12/02-per-decade.html Hope that’s OK.

For something more about climate change, including economic aspects of it, here is a logical argument for addressing climate change, in case you’re interested:

We often hear claims that global warming isn’t real, or if so, it’s not caused by humans. That human emissions didn’t cause previous polar ice melting doesn’t mean that it isn’t causing the current polar ice melting. Scientists have looked at all possible factors that might cause global warming, both human & natural, and have concluded overwhelmingly that the current global warming is due to human emissions.

Here are a few things to take into account while trying to determine the truth of the matter. First, keep in mind these principles: environmental health is directly correlated with personal health; rather than trying to control nature, which has gotten us the environmental disaster we face today, we need to learn to work with nature; water is far more valuable than oil or gas; trees are far more valuable than coal, or even gold, since we need water & trees simply to survive. If we abide by these truths, then we might have a chance to turn things around and keep this Earth habitable.

Try to look at motivation. It is amply & demonstrably clear that fossil fuel companies (& other huge multinational corporations) have plenty of motive to do & say whatever it takes to make sure that they have the right to extract whatever they want to extract & to poison whatever & whomever in the process, to make the maximum amount of profit possible, and to avoid any taxes or restrictions, no matter the cost to the rest of the population. Their greed has shown no bounds. Just look at Koch, Exxon, Monsanto & Walmart, for example. It is much harder to attribute any comparable motive, though many have tried, to scientists & academics whose lifelong mission has been to discover & explain the truths about the universe & how things work (except where they’re tied with corporations, in which case they may toe the line of the corporatist climate deniers).

Aside from that, if you are faced with these 4 outcomes:

1) the scientific community is right & we take the necessary measures to alleviate climate change: it could be expensive in the short run, but we would safely overcome the challenges with minimal climate & environmental disruption, and the overall financial benefits compared to doing nothing are likely to be very positive in the long run. The money we put up front will have been an excellent investment.

2) The scientific community is wrong but we take those measures, anyway: it would be expensive, but there’d be many other benefits we’d gain: much less pollution, we’d continue to have a diverse flora & fauna, we’d still have most of our resources, we’d be much healthier, we’d likely have a healthier employment situation, a less uneven wealth distribution, etc., & we’d gain new economic opportunity & advantages in the long run through innovation. Again, an excellent investment.

3) The scientific community is wrong & we don’t take those measures: we’d save some money in the short run, but our resources would be greatly depleted & therefore much more expensive; disputes over water & other resources would likely get steadily more frequent & violent, we’d have pollution of all kinds, our oceans would be dying (they already are), there’d be catastrophic extinction of plants & animals (already we’re experiencing one of the greatest mass extinctions in the history of this Earth due mainly to human activities), our overall health would likely decline, etc.

4) The scientific community is right but we do nothing: then even more calamitous extinction; huge displacement of human populations; widespread conflict over our remaining resources, including water; widespread & uncontrollable disease; increasing crop failure; intolerable weather; gigantic destructive storms; possible extinction of the human species.

So what is the best outcome? Actually, it’s #2: scientists are wrong but we take preventive measures – the response coal companies & other climate deniers are telling us not to take! We would’ve put some money upfront, but the side benefits would be well worth it & we wouldn’t’ve had to go through some of the worsening effects of climate change that we feared were impending. #1 would be the 2nd best outcome, because although we go through some tough times weathering through some of the effects of climate change that appear sure to come no matter what we do, we will have survived & overcome our greatest world climate threat we’ve ever faced. Even though it seems to be the industrialists’ dream, #3 comes out a poor 3rd, because although we wouldn’t face the climate challenges (beyond the normal cycles we’ve always faced), we’d still be a much worse place due to widespread pollution, resource depletion, wildlife extinction, etc., which would eventually take us down, anyway, even without being pushed on by climate change. Of course, #4 would be the worst. Conclusion: we must take the (now urgent & dramatic) measures necessary to combat climate change & environmental degradation, whether 97%+ of the scientists are correct, or certain powerful, superwealthy fossil fuel & chemical industries such as Koch, Exxon, Monsanto & Dow are correct. No matter which side is right, taking these measures is clearly the prudent course to take. The longer we wait, the more expensive it will be and the more we will suffer.

Even if these outcomes were considered to have equal possibility (they’re not: the probabilities overwhelmingly favor #1 or #4, depending on our action or inaction), and even if you didn’t care about maintaining animal & plant diversity, a pleasant environment, clean air, clean water, your own personal health & longevity to say nothing about other people’s (because if you were this way, you wouldn’t care about other people), but only about getting rich, any reasonable businessman, in order to insure himself from the worst consequences, which would be so dire that the differences among the other outcomes become trivial, would choose to take appropriate measures to tackle this possible climate change challenge.

Much more prudent would be to take measures to prevent or alleviate these disastrous changes, like becoming much more energy efficient, converting to clean, renewable energy, conserving more, paying for greenhouse emissions, consuming less, reducing intake of meat, avoiding plastic, expanding mass transit, avoiding driving cars, abandoning industrial farming for regenerative organic farming, reforesting our lands, reducing chemical spills & agricultural runoff, reducing the amount of land paved in concrete or asphalt… All these things would help forestall climate change, have many other benefits to the environment, people’s health & the economy, & aren’t hard to do, other than getting past the well-funded industries trying to block such measures. It’s a far better way than suffering through ever greater environmental & economic disasters, wishing & hoping that technology will come up with something to protect us. And it’s our moral imperative to do so.

There’s a YouTube video of a guy making this basic case which is also pretty persuasive, but I like your development of the argument.

Thanks, Doc!

I wish people would talk more about “global heating” (increasing heat in the climate system) and less about “global warming” (increasing surface temperatures).

During the discussions of the so-called hiatus, a question was: Where is the heat going if it is not appearing in increased surface temperatures as predicted? Radiation measurements assured us that the rate of global heating was continuing to increase. Some of the answers were discoveries in 2014 and 2015 of more short-cycle ocean overturning, particularly in the south Atlantic, which moved heat deeper in the oceans faster than had been expected, and much greater melting of ice from the bottoms of ice shelves and sea-terminating glaciers (2015 and 2016).

The total amount of heat in the climate system seems to be an important determinant of the climate regime (e.g., snowball Earth, ice age, or hot house planet regime). Humans have been on Earth only during an ice age climate regime, and human civilization developed only during a nicely stable interglacial period of our ice age. The danger is that human-caused global heating may result in an extremely rapid transition to a hot house planet regime and that most higher life forms will be unable to survive that rapid change.

This is a really excellent comment, bringing up very important points that are too often overlooked.

Indeed. They use the well known technique of “proof by contradictory citation” viz:

and cite “Fyfe, J. C. et al. Making sense of the early-2000s warming slowdown” who say:

i.e. Fyfe et al definitely do NOT assess the post-2000 global warming slowdown as statistically inconsistent with the continued warming in CMIP5 models.

There is something fishy about Knutson et al.

So it turns out that scientists are social animals with human weaknesses just like everyone else, but I’m a bit disappointed that peer review doesn’t weed out problems such as with broken trends and lack of uncertainty estimates.

Yes and the reviewers don’t check that the citations say what the authors claim they say.