Guest post by Stefan Rahmstorf, Grant Foster, and Niamh Cahill

A look at the global surface temperature evolution makes one thing very clear: claims that global warming has “stopped” or “slowed” are not exactly supported by the recent data. Last month was not just the warmest April on record; it also beat the previous April record by the largest margin since record-keeping began in 1880. (April 2016 was 0.24 °C warmer than the previous record April, in 2010; this margin was three times larger than the previous largest margin of 0.08 °C.) In fact, February was also the warmest February, by the largest margin on record. And January was the warmest January on record. Yes, by the largest margin. The running 12-month average global temperature (Fig. 1) is reaching new record highs every month.

Figure 1: 12-month running means of global temperature anomaly from NASA’s Goddard Institute for Space Studies.
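Fig. 1 smooths the monthly anomalies with a 12-month running mean, which averages out short-term and seasonal-scale wiggles. A minimal sketch, assuming a plain Python list of monthly anomalies in °C (not the actual GISTEMP file format) and a trailing window; the figure itself may align the window differently, and the function name is hypothetical.

```python
def running_mean(values, window=12):
    """Trailing running mean of a list of monthly anomalies.

    Entry k of the result averages values[k : k + window], i.e. the window
    ending at month k + window - 1. The result has len(values) - window + 1
    entries.
    """
    if not 1 <= window <= len(values):
        raise ValueError("window must be between 1 and len(values)")
    total = sum(values[:window])
    means = [total / window]
    for i in range(window, len(values)):
        # Slide the window one month: add the newest value, drop the oldest.
        total += values[i] - values[i - window]
        means.append(total / window)
    return means
```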

Nevertheless, claims of a pause or slowdown have not been made only by some US presidential hopefuls. As recently as this February, a paper by Fyfe et al. [1] in Nature Climate Change argued that a significant slowdown of global warming occurred in the early 2000s, specifically during 2001-2014. However, the evidence they present is not based on statistical analysis, and it makes a common error: the model they apply is unphysical because it assumes a “broken trend” with a jump discontinuity at the start of the purported slowdown. Since this error is so common, it is worth explaining here.

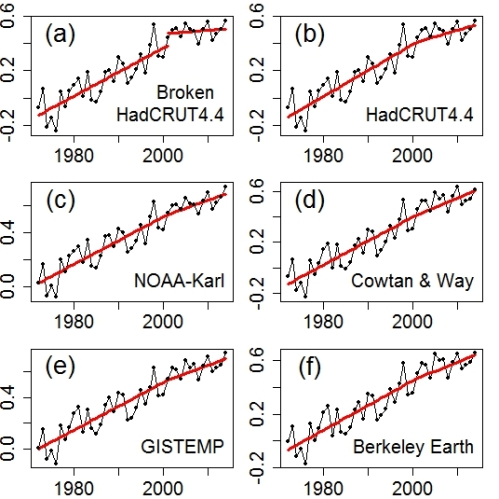

Their trend analyses (including their Fig. 2 showing running trends) all make the unstated assumption that trends are unconnected, i.e. “broken” trends as shown in Fig. 2 (a) below, corresponding to a model where phases of linear warming are interrupted by sudden jumps in temperature. Their claim is thus not merely that warming was slow during 2001-2014, but that the global temperature jumped up in 2001 to start this slow warming period at a warmer level.

An appropriate method to check for trend changes in time series is change-point analysis [2]; in this case, that involves modeling the data as a continuous piecewise linear trend. Several studies using change-point analysis (unfortunately not cited by Fyfe and colleagues) [3, 4] have found that there has been no significant change in the rate of global warming since ~1970.

Fig. 2 (b)-(f) show global surface temperature time series assuming a trend change at the start of 2001, with trends computed for 1972-2001 and 2001-2014 (as in Fyfe et al.) but without discontinuities, i.e. keeping the trend lines connected. The trend change at 2001 is minimal, particularly considering that 2001-2014 was hand-picked for its low trend. The difference is biggest in the data sets that are not fully global (HadCRUT4.4 and NOAA-Karl) because they exclude the data-sparse Arctic, where disproportionate warming has been observed since ~2000 [5].

Figure 2: Piecewise linear trends from 1972 through 2014, allowing a slope change at 2001, for five global temperature data sets. (a) HadCRUT4.4, treating the periods 1972 through 2001 and 2001 through 2014 as independent (i.e. a “broken” trend). (b) HadCRUT4.4, continuous trend. (c) NOAA-Karl, continuous trend. (d) Data from Cowtan & Way, continuous trend. (e) GISTEMP, continuous trend. (f) Berkeley Earth, continuous trend.
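The difference between the two kinds of model in Fig. 2 is easiest to see in the regression design. A minimal sketch using NumPy least squares; the function name, and the convention that a data point at exactly tb belongs to the second segment, are our own assumptions rather than details taken from Fyfe et al. Both models share a "hinge" term max(t - tb, 0) that lets the slope change at tb; the broken model adds a step term that also lets the level jump there.

```python
import numpy as np

def fit_two_segment_trend(t, y, tb, continuous=True):
    """Least-squares two-segment linear trend with a slope change at tb.

    continuous=True:  y ~ a + b*t + c*max(t - tb, 0)             (Fig. 2 b-f)
    continuous=False: y ~ a + b*t + c*max(t - tb, 0) + d*[t >= tb]
                      i.e. the "broken" trend with a jump at tb  (Fig. 2 a)
    Returns (slope before tb, slope after tb, residual sum of squares),
    with slopes in units of y per unit of t.
    """
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    columns = [np.ones_like(t), t, np.maximum(t - tb, 0.0)]
    if not continuous:
        columns.append((t >= tb).astype(float))  # step (jump) term
    X = np.column_stack(columns)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = float(np.sum((y - X @ coef) ** 2))
    return coef[1], coef[1] + coef[2], rss
```

With annual anomalies, t in years and tb = 2001, multiplying the returned slopes by 10 gives °C/decade. The broken model nests the continuous one (set d = 0), so its residual sum of squares is never larger; whether that extra freedom is warranted is a question for a significance test, not for how good the fit looks.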

In no case is the trend change statistically significant. We should perhaps mention why this matters and what the lack of significance means. Essentially it means that when taking into account the “noise” in the data (the short-term wiggles), the differences in trend are not robust. For example, just picking slightly different start and end dates will give different results. That is illustrated in Fig. 3, where we added one year at either end to the supposed “slowdown period” claimed by Fyfe and colleagues. How meaningful is it to say that the warming was slower than usual over the period 2001-2014, but faster than usual over the period 2000-2015? Right. Not at all. Both claims are technically correct, but the fact that neither is statistically significant tells us not to attach too much meaning to them, since it’s “all in the noise.” That is exactly what significance tests are for.

Figure 3: Trend rate of annual average global temperature, comparing the result for 2001 through 2014 (in blue) with that for a period extended by one year at each end, 2000 through 2015 (in red).
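The sensitivity to the choice of window that Fig. 3 illustrates can be explored by computing an ordinary least-squares trend over any start and end year. A minimal sketch in plain Python, assuming annual mean anomalies in two parallel lists; the function names are hypothetical. With real data, one would compare window_trend(years, anomalies, 2001, 2014) with window_trend(years, anomalies, 2000, 2015).

```python
def ols_slope(ts, ys):
    """Ordinary least-squares slope of ys against ts."""
    n = len(ts)
    t_mean = sum(ts) / n
    y_mean = sum(ys) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
    sxx = sum((t - t_mean) ** 2 for t in ts)
    return sxy / sxx

def window_trend(years, anomalies, start, end):
    """Trend in degC/decade over the inclusive window start..end."""
    pairs = [(t, y) for t, y in zip(years, anomalies) if start <= t <= end]
    if len(pairs) < 2:
        raise ValueError("need at least two years in the window")
    ts, ys = zip(*pairs)
    return 10 * ols_slope(ts, ys)
```

Because each of these trends rests on only 14 to 16 annual values, a single warm or cool year at either end can shift it noticeably.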

Now some people might say: but the real slowdown was during 2001-2012, not 2001-2014. But that is likewise not statistically significant, and this has been tested and published in the peer-reviewed literature. Here’s how the change-point analysis studies mentioned above work: they test trends for all possible combinations of start and end dates and look for points with significant trend changes (these are called change points). The last change point found in global surface temperature data occurred before 1980. Since then, no significant trend change has been observed.
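The idea of scanning for a change point can be illustrated with a simplified least-squares version: fit a continuous two-segment trend for every candidate kink year and keep the one with the smallest residual sum of squares. This is only a sketch under that assumption, not the exact method of the studies cited above, and the function names are hypothetical. Because the kink year is picked from the data, any significance test at the best candidate has to account for that search (for example by simulating many noise-only series); a plain F-test at the selected year would overstate significance.

```python
import numpy as np

def hinge_rss(t, y, tb):
    """RSS of a continuous two-segment linear trend with its kink at tb."""
    X = np.column_stack([np.ones_like(t), t, np.maximum(t - tb, 0.0)])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return float(np.sum((y - X @ coef) ** 2))

def scan_change_points(t, y, candidates):
    """Least-squares scan over candidate kink times.

    Returns the best candidate and a dict mapping each candidate to its RSS.
    Candidates should lie strictly inside the data range.
    """
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    rss = {tb: hinge_rss(t, y, tb) for tb in candidates}
    best = min(rss, key=rss.get)
    return best, rss
```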

We conclude that the early-2000s global warming slowdown is greatly overstated by Fyfe et al. Their central claim that “the surface warming from 2001 to 2014 is significantly smaller than the baseline warming rate” (1972-2001) is falsified by the statistical analysis discussed here.

Appendix

The table below lists the rates estimated for the 2001-2014 time span using a “broken” trend as in Fyfe et al., and using the more physical choice of a continuous trend, for the data sets used by Fyfe et al. [1] and two others: the Cowtan & Way data, based on HadCRUT but interpolating data-sparse regions by kriging [6], and the land+ocean temperature from the Berkeley Earth Surface Temperature project [7].

Warming rates (°C/decade) 2001-2014, and p-values for a trend change in 2001, for both broken trends and continuous trends. Rates for the 1972-2014 period range from 0.162 to 0.178°C/decade.

| Data | Broken trend: rate (°C/decade) | Broken trend: p-value | Continuous trend: rate (°C/decade) | Continuous trend: p-value |
|---|---|---|---|---|
| HadCRUT4.4 | 0.025 | 0.06 | 0.094 | 0.10 |
| NOAA-Karl | 0.079 | 0.32 | 0.117 | 0.26 |
| GISTEMP | 0.075 | 0.27 | 0.128 | 0.33 |
| Cowtan & Way | 0.060 | 0.10 | 0.144 | 0.45 |
| Berkeley | 0.050 | 0.10 | 0.133 | 0.38 |

The table also gives the p-values for a trend change having occurred at 2001, based conservatively on an F-test (assuming white noise), hence without considering the additional degree of freedom in the choice of when the trend change occurs. Even without this correction, the p-values fail to reach statistical significance at 95% confidence; a proper change-point analysis raises the p-values further. Omitting 2014, or both 2013 and 2014, does not change the lack of statistical significance. Note that even the questionable approach of Fyfe et al. with broken trend lines fails to yield a statistically significant trend change, not even for the data sets that ignore the Arctic.
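The F-test mentioned above compares two nested least-squares fits: a single straight line (2 parameters) against a model that allows a trend change at 2001 (3 parameters for the continuous trend, 4 for the broken one). A minimal sketch using only the Python standard library; the function names are our own, and the regularized incomplete beta function is computed with the standard continued-fraction method (as described, for example, in Numerical Recipes). Like the test described in the text, it assumes white noise and a break year fixed in advance, so it errs toward finding significance.

```python
from math import exp, lgamma, log

def _betacf(a, b, x, max_iter=300, eps=3e-14, tiny=1e-300):
    """Continued fraction for the incomplete beta function (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even step of the continued fraction
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        # Odd step
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h

def betainc_reg(a, b, x):
    """Regularized incomplete beta function I_x(a, b) for 0 <= x <= 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = exp(lgamma(a + b) - lgamma(a) - lgamma(b)
                + a * log(x) + b * log(1.0 - x))
    # Use the continued fraction where it converges quickly; otherwise use
    # the symmetry I_x(a, b) = 1 - I_{1-x}(b, a).
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b

def f_test_pvalue(rss_restricted, rss_full, n, p_restricted, p_full):
    """p-value of a nested-model F-test, assuming white (independent) noise.

    rss_restricted: residual sum of squares of the simpler model (one line)
    rss_full:       residual sum of squares of the model with a trend change
    n:              number of data points
    p_restricted, p_full: number of fitted parameters in each model
    """
    d1 = p_full - p_restricted
    d2 = n - p_full
    f = ((rss_restricted - rss_full) / d1) / (rss_full / d2)
    # Survival function of F(d1, d2): P(F > f) = I_{d2/(d2 + d1*f)}(d2/2, d1/2)
    return betainc_reg(d2 / 2.0, d1 / 2.0, d2 / (d2 + d1 * f))
```

For example, with 43 annual values (1972-2014), the continuous-trend comparison has d1 = 1 and d2 = 40 degrees of freedom; the broken-trend comparison has d1 = 2 and d2 = 39.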

References

[1]. Fyfe, John C., et al., Nature Climate Change, 6, 224-228 (2016).

[2]. Carlin, B. P., A. E. Gelfand, and A. F. M. Smith, Journal of the Royal Statistical Society, Series C (Applied Statistics), 41(2), 389–405 (1992)

[3]. Cahill, N., S. Rahmstorf, and A. C. Parnell, Environ. Res. Lett., 10, 084002 (2015)

[4]. Foster, Grant, and John Abraham, US CLIVAR 13(3), 6-9 (2015)

[5]. Dobricic, Srdjan, Elisabetta Vignati, and Simone Russo, Journal of Climate (ahead of print), doi:10.1175/JCLI-D-15-0417.1 (2016)

[6]. Cowtan, Kevin, and Robert G. Way, Q. J. R. Meteorol. Soc., 140, 1935-1944 (2014)

[7]. Rohde, Robert, Richard A. Muller, et al., Geoinfor. Geostat: An Overview 1:1 doi:10.4172/gigs.1000101 (2013)

[8]. Cowtan, Kevin, et al., Geophys. Res. Lett. 42, 6526-6534 (2015)

[9]. Schmidt, Gavin A., Drew T. Shindell, and Kostas Tsigaridis, Nature Geoscience, 7, 158-160 (2014)

[10]. Mann, Michael E., Stefan Rahmstorf, Byron A. Steinman, Martin Tingley, and Sonya K. Miller, Scientific Reports, 6, Article number: 19831 (2016), doi:10.1038/srep19831


P.S. Here is an additional figure, which is the same as Fig. 2 above but computes a separate trend for 2000-2015 rather than for 2001-2014. As you can see, there is no longer any sign of a slowdown except in HadCRUT4, and for the data from Cowtan & Way there is now a slight (not statistically significant) increase in slope. This illustrates what the lack of statistical significance signals: the “slowdown” is not a robust feature, as just adding one year at either end of the slowdown period highlighted by Fyfe et al. makes it disappear completely. (This was the originally intended Fig. 3, which due to a misunderstanding was missing at first, as several of our readers noticed.)

Why not mention that discontinuities in temperature trends are wholly unphysical??

“That is illustrated in Fig. 3” There’s no figure 3 shown; it must have been left out accidentally.

[Response: To quote Rick Perry, “Oops.”]

Dear Authors,

I would just like to give my two cents here. In most if not all of the papers, why is there so much emphasis on surface warming? Global warming is all about the globe, including ocean warming.

Giving so much attention to surface temperatures ignores the more than 90 percent of the heat going into the ocean. If statistical analysis is done only on surface warming, then the results, whether or not statistically significant, may not give the entire picture. Surface temperature may be just noise in the real signal, i.e. ocean warming.

Thanks,

Sourabh

[Response: Of course ocean warming is more physically important. But there are two reasons surface warming gets so much attention: first, it’s measured better over the last century+; second, it’s where we live.]

Fyfe et al agree about the lack of statistical significance of any slowdown:

but they make the mistake of assuming that the baseline that the slowdown is compared against is the period 1950 to 1999:

The fact is that the slowdown is still not statistically significant when compared with a baseline starting at the beginning of a faster warming period such as 1974 to 1999. It is a pity that Fyfe et al did not consider this when making the above claim.

Is it really true that a step change in global surface temperature anomaly would be “unphysical”?

What would be the physical explanation for both a change in intercept and a change in slope? Changes in slope could be caused by step changes in forcings, which are physically possible. But a change in intercept would have to be a step change in energy in the system.

Simultaneous spontaneous radioactive decay of a well-distributed fraction of a mass could instantaneously raise its temperature, but that is “unphysically” unlikely.

But the actual global average surface temperature can and does change by large amounts from one month to the next. When the temperature anomaly increases by 0.5 C in a single month, that’s not “noise” in the sense of measurement issues; it really happens. Yes, it’s weather, not climate. But the energy in the system does increase dramatically over a period of a few days. What physics says that, having increased by 0.5 C from one month to the next, it has to then go back down again?

Consider examples of heat transfer that are familiar, like pots boiling and ice melting (or, for that matter, dinner cooling). Then consider global heat content.

What could conceivably drive such enormous ‘instantaneous’ heat fluxes?

You may have an answer. I sure don’t, though.

Nigel, surface temps *might* swing 0.5C, though a quick glance at the last few years of Gistemp data shows that anything over 0.3 C is pretty unusual, and most month-to-month swings are 0.2 C or less. However, that’s still representing a small fraction of the heat in the system.

out-of-order reply: The trend line represents climate, not weather. It sounds like you are arguing for a model for climate that isn’t at least piecewise continuous.

Nigel, I don’t think the energy in the system goes up and down like that; it would be a transfer of energy within the system, and the exchange between ocean and atmosphere associated with ENSO is an obvious place to look. I don’t know if it’s been explicitly studied, but I strongly suspect a correlation between ocean surface temperatures and land surface temperatures would be there, and it would have a physical basis. The air near the surface may be what we experience, and it’s what the longest temperature records record, but it’s not nearly the whole climate system.

Well-stated, IMO.

Thanks for all the replies. I guess I originally interpreted the assertion that a step change is unphysical too simplistically.

But I would continue to suggest that it isn’t trivially obvious (and certainly not axiomatic) that a step change in the energy content of the surface layer would be unphysical. Sure, a step change in total ocean heat content would be unphysical, but we’re talking about surface temperatures. Would it be obviously impossible, for example, for the climate to enter an El Nino and never snap back out of it?

As for the possibility that “climate” isn’t at least piecewise continuous, that surely depends on what “climate” is. All the definitions of climate that I can find suggest that it is the long-term behavior of weather. For temperature, it’s often defined as a 30-year average, and meteorological services tend to update these averages once a decade. So by that definition, the “climate” element of temperature series is very definitely discontinuous, and in fact consists entirely of step functions.

I hope I’m not coming across all troll-ish here. There may be a little devil’s advocacy going on, as I intuitively believe that step changes in global surface temperature anomaly would seem unlikely. I also think that all the step-change nonsense you see at places like WUWT is utter tosh. But equally, I have this niggling thought that perhaps the possibility of step changes can’t be trivially – almost axiomatically – dismissed in the way that I’ve seen a few times on Tamino’s (most excellent) blog. And also that the “signal” vs “noise” distinction is non-trivial when the “noise” is weather (a real measured characteristic of the earth system, not measurement errors or extraneous interference from outside the system) and the “signal” is something called “climate” which isn’t really well defined.

It’s one thing to take a recording of Beethoven’s 5th symphony and try to distinguish the “signal” (noises made by musical instruments) from the “noise” (tape hiss, people coughing in the audience, etc.). But sometimes, analysis of temperature records seems to me like taking an already high-fidelity, low-noise recording of Beethoven’s 5th symphony and trying to use statistical analysis to find the “essence” of the music.

Nigel Harris,

One approach to your question that I think has not been addressed here is that we are working with anomalies of average temperatures when we discuss these monthly variations of tenths of a degree. We may well dismiss these variations as ‘noise’, although such variations do involve very large amounts of energy when such a change is of tenths of degrees Celsius and an average across the whole globe. Those big energy values need some further understanding.

Note the anomalies we use are there to eliminate the annual cycles from our calculations. The monthly change in temperature outside the tropics (and even inside the tropics) is usually far greater than tenths of a degree per month during Spring & Autumn. And those average temperatures are calculated using daily max/min temperatures. When the diurnal cycle is considered, we are talking of a change in just a few hours that is ten, twenty, thirty times bigger than the biggest global monthly anomaly variations.

I feel that consideration of these very large energy fluxes in operation throughout the global climate system makes more acceptable the idea that certain configurations of weather could raise the global average by one or two tenths of a degree Celsius for a couple of months and do it in effectively a random manner. Beyond a couple of months, the fluctuation is unsustainable as physics of the global energy balance rules.

According to Fyfe et al.

1972 to 2001 = 0.169 C/dec “planet warmed”.

2000 to 2014 = “slowdown”.

1972 to 2014 = 0.172 C/dec (…?)

Is it me, or is figure 3 missing?


This is a very timely post. In my graph-making, I was wanting to put something together to show the progress of this El Nino & future global temperatures w.r.t. their impact on the long-term warming trend. With all the guff about “hiatus” now dead and buried, this seemed timely & worthwhile.

But that left me struggling with the Fyfe et al-type short 15-year trends and whether they were valid or not. I had sort of concluded that they probably weren’t, but didn’t feel at all confident in my assessment, so I was still toying with their analysis. (I’ve added the latest data to their Fig 2 here; usually 2 clicks to ‘download your attachment’. The trends became 16-year rather than 15-year, which does make quite a difference to the detail of the traces.) I eventually plumped for using HadCRUT4 & 12-month/120-month rolling averages. This has the merits of simplicity & understandability and fits with other graphs on the webpage. And it also shows no significant “slowdown” or “hiatus”. Reading this post allowed me to upload my creation with confidence (here, 2 clicks).

Again an interesting post on analyzing change points.

There is no Figure 3, which is mentioned in the text. I assume this to be a mistake.

I am surprised that Fyfe was published; I presume that this is a reply. It is too bad that you have to waste your time on rebuttals like this; Fyfe should never have been published. Why didn’t the reviewers ask Fyfe to compare with the change-point analyses that were already in print?

It appears to me that your figure 3 is missing from this post.

I’m not seeing that figure.

It was covered up by some arctic sea ice, but global warming fixed the problem.

Figure 3?

Nice. This makes me want to run the same series through my recent MCMC breakfit code to estimate the difference in trends and the confidence interval of that difference. https://github.com/grinsted/gwmcmc/blob/master/html/ex_breakfit.md

The paper is paywalled of course but tidbits reach the intertubes, and from those, I’m not understanding how the Fyfe et al argument advances the science at all.

Aren’t they just describing the escalator?

And then comparing it to forecasts running hot when you guess forcings wrong, whereas hindcasts run dead on when you feed in the actual forcings?

Fyfe et al (2016) appears to be accessible via this link.

Dear Authors,

I am disappointed in your answer to Sourabh, above. When the Idiot @ WUWT says, “No, it is getting colder here,” you say, “It is global warming, and one must consider the temperature on a global basis.” And I repeat your very true statement: “One must consider the temperature on a global basis!”

We need to consider the total amount of heat gained in the biosphere, where we live. We now know that plankton can be moved around the world in a decade. If the plankton is moving farther and faster than we thought, then so is heat being advected farther and faster than we thought. Most of the CO2 in the system is in the oceans. Most of the CH4 cycle is in the oceans. Most of the biomass is . . . where? The oceans are a big part of the biosphere where we live. Not counting the oceans is like looking at the AC thermostat on your office wall and saying, “See! 72 F, there is no heat wave!”

Moulins on Greenland mean that large volumes of ice are warmed to their melting point. Over the last few years a lot of heat has gone to warming, and even melting, ice. That heat is in our biosphere. It needs to be accounted for.

Science is about telling the truth. The truth is: that by not including some large stores and flows of heat, climate science can pull the blankets over our head, and pretend the heat monster is not that close. The truth is that the heat monster is very close, and can easily penetrate blankets.

We do not need to worry about the trends or the global average. We need to worry about the local day-by-day weather – with all of its noise and cycles added in. Weather is one big thermodynamic engine. And, like a truck engine, when an important part breaks, the whole engine breaks. Anytime, anywhere that the total local heat gets to 35C, people break. Anytime (for a few days), anywhere that the total local heat gets to 35C, our important crops break. A 30-year climate average, or even an annual average that is “livable”, does not matter if occasional “exceptional” heat events are killing large numbers of people and crops.

The Russian heat wave of 2010 was a “Black Swan” that tells us that such heat waves are possible, not just in the tropics, but in – Russia! Remember that last year there was significant ice melt along the north shore of Greenland. These events were messages from Mother Nature, that Climate Science is reticent, rather than frank.

Every climate temperature graph should include a line representing maximum plausible heat allowing for total heat in the biosphere and the whole alphabet soup of weather cycles and effects. And, every climate temperature graph should include the Black Swan(s) that we must avoid at any cost.

Your Loyal Alarmist

We need to worry about the local day by day weather – with all of its noise and cycles added in.

Yes indeed. But we do also need to worry about the trend, which way it is going, and how quickly.

> a step change in energy in the system.

> Simultaneous spontaneous radioactive decay

> of a well-distributed fraction of a mass ….

Yeah, that’s the explanation we’ve been looking for. A few — globally sparse and widely distributed –atoms of antimatter slipping in from that alternate universe nearby, immediately converting that mass and an equal mass of our universe into heat, would explain that step up.

With just an occasional popping, snapping, background noise or random tiny bright flash from time to time.

Likely from that nearby universe in which the auditors are kindly soft-spoken gentlemen who will always take you in.

Ooh! Antimatter is even better than my synchronistic-episodic radioactive decay theory! Too bad that these step-change models are not observed to be significantly better than models based on traditional conservation of energy.

I agree with most of this post but have a defence of the likes of Fyfe et al.

The observed warming was at the lower end of model expectations and that was well worth investigating. Temperature records have been improved, we understand how to better compare them with models and we’ve learned more about stratospheric water vapour, solar activity and background volcanism.

The most important research seems related to the oceans, particularly the PDO, trade wind strengthening etc. Something really happened there and some great papers have come out of that.

It’s easy to miss how this fits together though. Stefan Rahmstorf et al. are doing a model of joined up linear trends + noise and find no evidence for a slowdown statistically speaking. The people who tend to use “hiatus” in paper titles tend to be looking at something like forced signal + some internal variability + noise. Their “some internal variability” has been extreme over this period and has tried to cool the surface, e.g. England et al. found “unprecedented” trade wind strength recently and that this acted specifically to try and cool Earth’s surface.

Both “sides” seem to be talking past each other a little. Sometimes one “side” brings up a weaker argument which gives the other “side” the chance to pull the discussion in that direction without noticing that IMO the evidence actually fits together quite nicely in a single story that draws a bit on evidence from both “sides”.

Unprecedented step changes or discontinuities in the forcings are not unphysical. However, modeling their effects on the system as a step change in internal energy, as the piecewise-discontinuous models imply, is unphysical and misleading. Modeling a step change in forcing as a step change in the intercept plus a step change in slope will underestimate the rate-change effect by hiding part of the effect in the intercept-change term.

I believe that the 2016 global temperature anomaly in the NASA data set will end up at 0.94C, relative to the 1951-80 mean. NASA has Jan-Apr. at 1.21C, so the coming La Nina will help cool off the 2nd half of the year, relatively speaking of course (the 2nd half will still be unusually warm). I’ve been playing around with the data in an Excel spreadsheet and wanted to know if anybody thinks that is a reasonable prediction? If so, it’ll be three years in a row of a record high global temperature.

NASA GISS data:

http://data.giss.nasa.gov/gistemp/tabledata_v3/GLB.Ts+dSST.txt

There are a lot of people who think 2016 will end up above 1.0 ℃, so by comparison your prediction is conservative.

If each remaining month of the year were 0.07 C cooler than the month that immediately precedes it then the anomaly for the year would be about 0.93 C, and the December anomaly would be 0.55 C.
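The arithmetic behind this scenario is easy to check. A minimal sketch: the Jan-Apr mean of 1.21 °C is from the comment above, while the April value of 1.11 °C is our inference (the stated December value of 0.55 °C plus 8 × 0.07 °C), not a figure quoted from NASA. The function name is hypothetical.

```python
def declining_year(jan_apr_mean, april, monthly_drop, remaining=8):
    """Annual mean if each remaining month is `monthly_drop` cooler than the one before.

    Returns (annual mean, value of the last month).
    """
    rest = [april - monthly_drop * k for k in range(1, remaining + 1)]
    annual = (4 * jan_apr_mean + sum(rest)) / 12
    return annual, rest[-1]

annual, december = declining_year(1.21, 1.11, 0.07)
print(f"2016 mean: {annual:.2f} C, December: {december:.2f} C")
```

This prints "2016 mean: 0.93 C, December: 0.55 C", matching the figures in the comment.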

“If each remaining month of the year were 0.07 C cooler than the month that immediately precedes it”

That is of course, completely hypothetical. The word “If” provides the clue.

If a Nino/Nina transition this year has the same timing and magnitude as 1998 (which was the quickest turnaround – 2 months) the result would be about 1.15C for 2016.

Worked out by averaging GISS Jan-Apr (0.685) and May-Dec 1998 (0.606), and assuming the same difference for this year.
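The 1998-analog estimate can be reproduced the same way. A minimal sketch, assuming the 2016 Jan-Apr GISS mean of 1.21 °C quoted earlier in this thread; the 1998 figures are the ones given in this comment, and the function name is hypothetical.

```python
def analog_annual(jan_apr_now, jan_apr_1998=0.685, may_dec_1998=0.606):
    """Annual mean if May-Dec falls below Jan-Apr by the same amount as in 1998."""
    drop = jan_apr_1998 - may_dec_1998  # 0.079 degC in the GISS 1998 figures above
    may_dec_now = jan_apr_now - drop
    # Jan-Apr covers 4 months, May-Dec covers 8.
    return (4 * jan_apr_now + 8 * may_dec_now) / 12

print(round(analog_annual(1.21), 3))
```

This gives about 1.157 °C, in line with the “about 1.15C” above.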

However, ENSO is but one factor influencing, and while >1C for GISS 2016 seems more likely than not, I wouldn’t bet on it.

so the coming La Nina will help cool off the 2nd half of the year

A la Nina is likely but not inevitable. The super el Nino of 1998 was followed by la Nina in the latter part of that year, but it was more than a year before la Nina followed the super el Nino of 1983.

A lot of people are taking it as a given this year. The skeptics are doing so for obvious reasons, but I don’t know why anyone else would.

I think it’s quite low. Then the remainder of the year would need to be far below 1.0°C.

I did a linear regression of GISS and MEI data against the final year temperature and got 1.07+-0.08°C.

https://moyhu.blogspot.de/2016/02/prospects-for-2016.html?showComment=1463556840184#c4575014473863959451

I also tried to predict MEI in order to see whether there will be a La Nina or not, but the skill is poor so far, and it predicts no La Nina for the end of 2016. So if a La Nina does develop, the temperature may be lower, but I doubt it will be below 1.0°C.

I improved my prediction to 1.04+-0.06°C, see the link above.

Uli, another (slightly) interesting question is whether 2016 in the satellite record will be warmer than 1998, the current record. The averaged monthly anomaly from May through December for UAH v6.0 beta5 (the skeptics’ preferred) would have to be at least 0.38C to do that.

http://vortex.nsstc.uah.edu/data/msu/v6.0beta/tlt/tltglhmam_6.0beta5.txt

1998 is also, neatly, the warmest 12-month average in the UAH time series. To beat that record, the average anomaly from May-Sept this year would have to be >0.36C (12-month av Oct 2015 – Sep 16).

Haven’t bothered figuring it out for RSS, which the skeptics will probably denounce later in the year when TLT version 3.4 is released.

The PDO is very different so far this year versus 1998.

@barry: I don’t think that the satellite record is nearly as interesting, because besides the temperature signal it contains systematic errors from various sources of the same magnitude as the anomaly (at least the old RSS; UAH may be no better). But if I do a linear regression for UAH as for GISS, I get 2016 warmer than 1998 by 0.14+-0.10°C.

A numeric prediction for the future does not really matter. In the short term there is too much noise to make valid predictions, and in the longer term, there is not really enough data.

What matters is that there is a deadly heat wave in India right now, and there have been deadly heat waves somewhere every year for the last 5 years. The ‘Black Swan’ was the European Heat Wave of 2003, when 20,000 people died, in a “cool, hiatus year”! It matters that Canada has burned 3/4 million hectares of forest this year, and it is not even June yet. It matters that Houston has had multiple flood events in the last year.

The point is that we are already outside of the temperature ranges that civilizations, engineers, planners, and policy makers expect, and it is going to get hotter. Limits to Growth warned us in 1972. In 1986, the Saudis told us that burning all the oil would be an environmental catastrophe. And yet, the US Sec. of Interior is still saying that going off fossil fuel abruptly is a naive approach.

Letting AGW eat our civilization is a more naive and cowardly approach. IPCC WR1 (Watson, et al., 1992) missed important carbon feedback loops in the oceans (e.g., stores, biology, and flows of CH4), and those omissions have propagated forward in all IPCC work. Thus, actual warming will be larger and happen sooner than the IPCC forecasts. However, Jewell still believes in the IPCC, and has not yet recognized reality. She needs to look out her window and do the math.

Statistics is about getting the correct answer. Sometimes the question is binary: (Go to war; or, not). Did Roosevelt and Churchill have more and better data for and about going to war against the Nazis than we have today for going to war against AGW? Did they have better data analysis? AGW has invaded many countries, and killed more people than died in the September 1939 invasion of Poland that triggered Great Britain’s entry into the War. AGW has killed more people than the Attack on Pearl Harbor that pushed the US into the war. Consider the number of deaths in heat waves. Consider damages (http://www.reuters.com/article/us-disaster-insurance-idUSKBN0UI0XI20160104 ) If aliens invaded Europe and killed 20,000, would we go to war against those aliens? Why did we not go to war against AGW as a result of the 2003 heat wave? In terms of casualties, the 2003 European Heat Wave was 6 times worse than 9/11!

It is time to remember that the folks at Bletchley Park and Los Alamos did brilliant statistics. They came out of Oxford, Cambridge, Princeton, and MIT, and got involved with the war effort. History is written by the winners. If we win the war against AGW, those that helped will be remembered, and glorified. Against AGW, we do not have to worry about disclosing sources and methods. We can act on every scrap of intelligence that we have. That is the point: we should act.

If we lose the war against AGW, there will be no glory for anyone. Mother Nature will just forget about us.

As is often the case, your summary is effective and cogent. Thanks.

Yes, well said. My rough and ready guesstimate is that we have suffered at least a hundred thousand premature deaths and a hundred billion dollars in economic losses so far to AGW.

And, of course, the clock keeps running.

You should publish this in Nature Climate Change.

MarkR,

“I agree with most of this post but have a defence of the likes of Fyfe et al…. The observed warming was at the lower end of model expectations”

By the time the most recent Fyfe paper was published, this was no longer true, though.

Addressing coverage bias and the blended tos/tas issue alone mooted that. This is before considering the Schmidt et al. 2014, Huber and Knutti 2014, Meehl et al., and Kosaka and Xie 2013 work showing that properly accounting for tropical Pacific variability plays such a big role.

Also, no one seems to remember we had the CMIP3 runs to look at this whole time.

“Both ‘sides’ seem to be talking past each other a little.”

I don’t think this is quite true. One side is clearly demonstrating that there was no “pause” or “hiatus” or “slowdown” in terms of a statistically detectable change in the rate of warming. The other side has used language that originally meant those things to talk about a different issue (the model-obs “divergence”).

This is like saying two groups are talking past each other when one group is talking about ketchup as a tomato-based condiment and another group insists on using the word ketchup to describe what everyone else calls mustard. It’s not a matter of talking past each other so much as the second group using incorrect terminology.

“By the time the most recent Fyfe paper was published, this was no longer true, though.”

Indeed the Fyfe paper is an analysis of history. And it says:

New understanding notwithstanding, analysis of the now-finished slowdown is pretty much just moving deckchairs.

Hank, maintaining a step in temperature requires a sustained boost in power, not a one-off injection of energy. So either the other universe sprang a persistent antimatter leak or there was some big invisible hysteresis in our climate.

That’s an interesting observation. But doesn’t that apply to an entire closed system, rather than to one bit of it, like the surface layer of the atmosphere?

As Earth warms, the surface glows more brightly and it sheds heat faster (e.g. the Stefan-Boltzmann law). You wanna keep warm, you need a constant fuel source.

Anything that loses heat faster when it warms shows this effect. So it’s true of a layer of the atmosphere just as much as it is for all of Earth.
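To put a rough number on this, here is a minimal Python sketch of the Stefan-Boltzmann response, including its linearized form dF ≈ 4σT³dT. The effective emitting temperature of 255 K is an assumption (a common textbook value), and the sketch ignores feedbacks and the atmosphere’s vertical structure, so treat it as an order-of-magnitude illustration only.

```python
# Extra outgoing flux needed to hold a small warming, from the Stefan-Boltzmann law.
# Assumption: a single effective emitting temperature (255 K below); no feedbacks.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def emitted_flux(temp_k: float) -> float:
    """Blackbody flux (W/m^2) emitted at absolute temperature temp_k."""
    return SIGMA * temp_k ** 4


def extra_flux_for_warming(temp_k: float, delta_k: float) -> float:
    """Exact extra flux shed when the emitter warms from temp_k by delta_k."""
    return emitted_flux(temp_k + delta_k) - emitted_flux(temp_k)


def linearized_extra_flux(temp_k: float, delta_k: float) -> float:
    """First-order approximation dF = 4 * sigma * T^3 * dT."""
    return 4.0 * SIGMA * temp_k ** 3 * delta_k


if __name__ == "__main__":
    T_EFF = 255.0  # hypothetical effective emitting temperature, K
    for dT in (0.1, 0.2, 0.5):
        print(f"dT = {dT:.1f} K -> extra emission "
              f"{extra_flux_for_warming(T_EFF, dT):.2f} W/m^2 "
              f"(linearized: {linearized_extra_flux(T_EFF, dT):.2f})")
```

Under these assumptions each kelvin of warming at the emitting level sheds roughly 3.8 W/m² more, every second it persists, which is why a step up in temperature has to be paid for continuously rather than once.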

This paper

http://onlinelibrary.wiley.com/doi/10.1002/jame.20049/full

estimates a thermal relaxation time of 3-30 months depending on how much of the heat capacity of the oceans is included.
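A minimal way to see what such a relaxation time implies is a one-box energy balance model, C dT/dt = F - λT, with τ = C/λ. This Python sketch is an illustration under assumptions, not the paper’s model: the feedback parameter (1.2 W m⁻² K⁻¹) and τ = 12 months (inside the 3-30 month range quoted) are hypothetical. It compares a one-off 0.2 K jump with no forcing behind it against a sustained forcing whose equilibrium is the same 0.2 K.

```python
import math


def one_box(forcing, lam, tau, t0=0.0, dt=1.0):
    """Step the one-box model C dT/dt = F - lam*T, with tau = C/lam.

    forcing: forcing values (W/m^2), each held constant over one step of length dt
    lam:     feedback parameter (W m^-2 K^-1)
    tau:     relaxation time, in the same units as dt (months here)
    t0:      initial temperature anomaly (K)
    Returns the anomaly at the end of each step.
    """
    decay = math.exp(-dt / tau)
    temps = []
    temp = t0
    for f in forcing:
        equilibrium = f / lam
        # Exact solution for constant forcing over one step: relax toward F/lam.
        temp = equilibrium + (temp - equilibrium) * decay
        temps.append(temp)
    return temps


LAM = 1.2   # hypothetical feedback parameter, W m^-2 K^-1
TAU = 12.0  # hypothetical relaxation time, months
MONTHS = 60

# Case 1: a one-off 0.2 K jump with no sustained forcing afterwards.
pulse = one_box([0.0] * MONTHS, LAM, TAU, t0=0.2)
# Case 2: a sustained forcing of 0.24 W/m^2, whose equilibrium is 0.24 / 1.2 = 0.2 K.
step = one_box([0.24] * MONTHS, LAM, TAU)

for month in (12, 24, 60):
    print(f"month {month:2d}: one-off jump {pulse[month - 1]:.3f} K, "
          f"sustained forcing {step[month - 1]:.3f} K")
```

Under these assumptions the one-off jump has mostly decayed within a couple of τ, while only the sustained forcing holds the temperature up; a longer τ (more ocean heat capacity included) stretches the decay but does not change that conclusion.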

A change in the rate of warming should surely be accompanied by a change in a physical cause of warming. If you want to claim the former, you really should provide some evidence of the latter. Even a statistically significant change point could be due to noise unless a cause can be identified. The less statistical significance there is, the more important it seems to me to provide causal evidence. Furthermore, with your physical cause established, you should show the time scale over which that change will show up and where it might be most prominent.

It makes me wonder just how big and persistent a change in the drivers of surface temperature must be to show up as a change in the observed rate of temperature change. If the change is not big or persistent enough, then I would expect it to be lost in the noise in the temperature and/or in the fluctuations present in the underlying causes.

Although still not noticeable by the human senses, the global measurements definitely indicate an increase in temperatures by 1 degree in the past 30 years, and the increase in speeding up, so it’s not a constant. This means there is progress towards the increments of temperatures. Our company collaborates with global markets to facilitate these analytic measurements [link redacted] and follow the movement of climate change all around the globe. We have organized projects that are ongoing and set to offset our carbon emissions by a significant percentage in the global market. Hopefully people will understand the significance of this problem and how it affects our future.

[Response: The trend of global (land+ocean) temperature has not risen by a full degree C in the last 30 years. If you meant deg.F you should have said so — it’s not the scientific standard. If you meant the difference from lowest low to highest high — including the random fluctuations — you’ve committed the same error deniers do to try to minimize global warming.

And over the last 40 years, there’s not yet significant evidence of “the increase in speeding up.” How you justify that claim, I can’t imagine.

Which makes your comment look to me like spam for a company which *might* have a worthwhile goal, but doesn’t have much command of the facts regarding climate change.]

“A look at the global surface temperature evolution makes one thing very clear: [C]laims that global warming has ‘stopped’ or ‘slowed’ are not exactly supported by the recent data.”

I don’t mean to be an ass, but please consider altering “very clear” or strengthening “not exactly supported” in future versions.

Fyfe et al comment on Climate Lab Book (http://www.climate-lab-book.ac.uk/2016/slowdown-discussion/):

“The bottom line is that global surface temperature experienced a warming slowdown over the early-2000s, and this slowdown was at odds with our expectation from most climate model simulations. Whether the slowdown was or was not statistically significant is, at least in our opinion, of relatively minor importance. The key point is that a measurable slowdown occurred,…”

Now I am just a retired stats sort of guy, but that statement would certainly never have flown in my area. How the heck can you have a measurable slowdown that isn’t discriminable from noise? Do I need to study more physics?

[Response: A statement like “Whether the slowdown was or was not statistically significant is … of relatively minor importance” makes me think they need more appreciation of statistics. Especially since the term “slowdown” is so loaded.]
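One way to see how a “measurable slowdown” can be indistinguishable from noise is a quick Monte Carlo: generate many series with a perfectly constant underlying trend plus random noise, fit ordinary least squares to 15-year windows, and look at the spread of fitted trends. This Python sketch assumes annual white noise; the trend (0.018 °C/yr) and noise level (0.1 °C) are hypothetical round numbers, not fitted to any dataset, and the noise in real temperature records is autocorrelated, which would widen the spread further.

```python
import math
import random
import statistics


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    mx = statistics.fmean(x)
    my = statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx


def simulated_short_trends(true_trend, noise_sd, window, n_sims, seed=0):
    """Fitted trends from n_sims series that all share the same constant trend."""
    rng = random.Random(seed)
    years = list(range(window))
    slopes = []
    for _ in range(n_sims):
        temps = [true_trend * t + rng.gauss(0.0, noise_sd) for t in years]
        slopes.append(ols_slope(years, temps))
    return slopes


def theoretical_slope_sd(noise_sd, window):
    """Standard deviation of the OLS slope under white noise: sigma / sqrt(Sxx)."""
    sxx = window * (window ** 2 - 1) / 12.0  # Sxx for equally spaced x = 0..window-1
    return noise_sd / math.sqrt(sxx)


TRUE_TREND = 0.018  # hypothetical underlying trend, deg C per year
NOISE_SD = 0.1      # hypothetical white-noise level, deg C
WINDOW = 15         # years per fitted window

slopes = simulated_short_trends(TRUE_TREND, NOISE_SD, WINDOW, n_sims=2000)
slow = sum(s < TRUE_TREND / 2 for s in slopes) / len(slopes)
print(f"mean fitted trend: {statistics.fmean(slopes):.4f} C/yr")
print(f"spread (sd): {statistics.stdev(slopes):.4f} C/yr, "
      f"theory {theoretical_slope_sd(NOISE_SD, WINDOW):.4f}")
print(f"share of windows below half the true trend: {slow:.1%}")
```

With these made-up numbers a few percent of 15-year windows show a “slowdown” to less than half the true rate even though nothing physical changed, which is exactly why significance has to be checked before calling it one.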

The post at Climate-Lab-Book is actually presented as a reply to this post, saying:

Fyfe et al. (or more precisely Ed Hawkins on behalf of Fyfe et al.) agree that statistical analysis is “a vital tool” but say that statistical significance should be seen in the context of “broader physical understanding.” They point to a slowdown, albeit without statistical significance, being ever-present over the period, to there being “considerable physical understanding of the drivers of this slowdown,” and to an absence of ‘slowdown’ in models, all of which leads them to overrule the absence of statistical significance.

On the points below (1, 2 & 3), I feel the argued case is inadequate (less-than-well-made rather than flat wrong). For me, this all rather buries itself in definitions of ‘slowdown’ rather than disputing what constitutes statistical significance.

(1) They say of statistical significance that it is dependent on data, period, etc., & that they “pointed out many of these issues in our Commentary” (although Fyfe et al. (2016) only does so by referencing Santer et al. (2014), ‘Volcanic Contribution to Decadal Changes in Tropospheric Temperature’ [which doesn’t cut the mustard for me]).

(2) On the strength of (1), this post is dismissed as being “one particular choice of analysis.”

(3) An analysis using model data from normal runs & from runs with SST set to recent ENSO data is used to show the impact of ENSO & how 20% of the ENSO runs do yield a statistically significant change in trend.

Got time to look at this?

http://www.csmonitor.com/Science/2016/0524/Bad-science-Former-Microsoft-exec-accuses-NASA-of-statistical-errors

It’s about asteroid counts, not climate, but it might be generally worth a look.

Or a read from Phil Plait over at Slate:

http://www.slate.com/blogs/bad_astronomy/2016/05/27/nathan_myhrvold_claims_nasa_scientists_asteroid_calculations_are_all_wrong.html

Just shows you how people that are very competent in their own field can go off the planet when they venture outside of it. I especially liked this howler:

NASA scientists forgot about Kirchhoff’s law? Riiiiiiight.

Well, when you say it like *that*… ;-)

Cool what Myhrvold is trying to do here. Smart move going straight to arXiv. The main goal is to get ideas across, and you can sort it out later. Having the paper available could spawn lots of school projects. The negative is that he may have gotten some details wrong. Who exactly does that hurt?

Perhaps the researchers who are being slandered will not like it so much. We will have to see if this paper stands the test of time. It appears that the more experienced researchers do not think highly of his work yet.

Yes, “details” like the conclusions.

Anyone who is misled by the false conclusions, I guess.

Have any of you slogged through Myhrvold’s manuscript yet? Probably not. I have. And from the perspective of one who developed and used some of the original asteroid thermal algorithms and other techniques to determine diameters and albedos of asteroids in the 1970s and 80s, Myhrvold is correct that the NEOWISE algorithm has not used Kirchhoff’s law correctly in all cases. In particular, there is some loose trading of spectral emissivity and bolometric emissivity in their models. Myhrvold is correct that the NEOWISE mixing of spectral and bolometric emissivity is not good, especially when trying to separate those portions of reflected sunlight from thermal emission in bands 1 and 2. I agree with Myhrvold that the statistical analysis and model calibrations have some very odd aspects. My first impressions (read) lead me to believe the scientists involved were “sloppy” in their algorithm development, but believed the work to be “good enough” (but Myhrvold does not think so). How might this happen? I suspect that the NEOWISE PI (whom I do not know personally) may have “grown up” accepting the previous models as a basis to work from, accepted their underlying assumptions without question, and has been building on the old approximation algorithms, which were “good enough” back in the day when we had no idea there were “black” asteroids (pv ~ 0.04) and “white” asteroids (pv ~ 0.4). My job now is to ask my colleagues for the inside information to find out what and how much egg is on whose face.

When I read Myhrvold’s manuscript I began to reflect on climate models, too. I came to the rash conclusion that there are too many competing models for too much concern. (What? Me Worry?). From time to time, it is very, very, very worthwhile (to borrow from current methods of emphasis) to go back and question the basic assumptions of a model from an outsider’s skeptical point of view. I did find Myhrvold’s style and tone to be a bit aggressive, but not so much as to be annoying. But then, I have no skin in the game whereas the NEOWISE PI does.

[Response: My opinion: you are so far off base it isn’t even funny. The real test of models (including Myhrvold’s) is how well they agree with cases of known diameter (for which there are many asteroids). The NASA/JPL method works well, Myhrvold’s falls flat on its ass. But worst, by far, are his (very) thinly veiled implications of fraud on the part of NASA scientists.

Also my opinion: he’s a classic case of hyper-arrogant Dunning-Kruger.]

Plait said the biggest issue is that Myhrvold never got his manuscript peer-reviewed. Now guess what? Everyone is reading it and providing comments. Brilliant, in that it’s probably getting a better review than it would through the normal scholarly channels.

These days things are not as they once were. I am shopping around my paper on ENSO and QBO modeling and use arXiv to store the preprint. Journals such as Physical Review Letters actually recommend that you file the manuscript on arXiv before submitting and then give them the arXiv tracking citation, like this: http://arxiv.org/abs/1411.0815

Funny how the goalposts get moved from:

to:

I see Myhrvold occupying a spot similar to someone like the late David MacKay, who wrote about lots of topics, including renewable energy. Check out Myhrvold’s forays into climate science research. He has been peer-reviewed in this area and was smart about it because he co-wrote with Ken Caldeira, who is a climate science insider. In the asteroid area, he decided to go it alone and so is getting the almost predictable blowback.

[Response: No, the “blowback” is because he included nasty implications of malfeasance on the part of other scientists, and because he has failed to make some very basic corrections that have been pointed out to him. This isn’t a case of “rebuffed just because he’s an outsider” — despite how much he might want to play the “persecuted martyr.”]

The overall idea of what they are trying to do with estimating asteroid sizes is straightforward. There is no mystery here. Inferring emissivity is probably the trickiest part, IMO. Otherwise it’s relatively intuitive deductive problem solving. Jonathan Gradie commented, and he has quite a resume when it comes to asteroid research, so I will defer to him. I find it odd that Gradie is dismissed with a “so far off base it isn’t even funny” blow-off.

I really commented on this particular story because of the contrast to what I am trying to do with understanding mysterious phenomena such as QBO and ENSO. For QBO, you have Richard Lindzen claiming to explain how the mechanism works, yet he completely misses the precise correlation to lunar forcing periods. What a howler Lindzen made there with his 40+-year-old theory!

Tamino replied: “My opinion: you are so far off base it isn’t even funny.”

After Jonathan Gradie wrote: “Myhrvold is correct that the NEOWISE algorithm has not used Kirchhoff’s law correctly in all cases. In particular, there is some loose trading of spectral emissivity and bolometric emissivity in their models. Myhrvold is correct that the NEOWISE mixing of spectral and bolometric emissivity is not good, especially when trying to separate those portions of reflected sunlight from thermal emission in bands 1 and 2. I agree with Myhrvold that the statistical analysis and model calibrations have some very odd aspects.”

These seem to be fairly specific arguments. They do not express agreement with Myhrvold in total – just specific criticisms Myhrvold has made.

And I would have to agree with WHUT; Asteroid 3253 Gradie indicates Dr. Gradie has more than passing knowledge of the subject.

The correct link for Asteroid 3253 Gradie is:

http://adsabs.harvard.edu/full/1987MPBu…14…37T

Interesting to find out where Myhrvold’s paper will eventually get published. It’s over 100 pages, and full of charts. Usually that long a paper goes into a review article (such as Reviews of Modern Physics) or a monograph. He may go the route of publishing as a book, and skip the conventional peer-review process of journal articles.

There is a big hole in peer-review with respect to books, where you can get someone like a Murry Salby publishing lengthy texts on climate science, where he can squirrel away all sorts of odd assertions amongst the conventional bits of knowledge.

Interesting responses to my comment. I’ve seen and been involved in worse, most commonly in professional meetings and review panels, so no offense taken. Myhrvold’s taking the fight into the public venue is a bit over the top; most of us would go drinking, in public, after meetings so our yelling and screaming would be taken as drunken banter by the regulars.

I hoped that a key issue would have been of interest: namely, the use of bolometric emissivity instead of spectral emissivity in thermal models covering the 2-5 micron band, where, as in the case of NEOs (but not as much for cooler objects at r > 3 A.U.), combined thermal and reflected fluxes need to be disentangled. In climate science, analogous thermal leakage occurs in radiative processes in the atmosphere. An ancillary concern, into which I have not delved and have no time at the moment to delve (I’ll leave it to my colleagues who are better positioned to sort it all out), is the odd comment by Myhrvold that the NEOcam model calibration database has model diameters for asteroids of known diameter equal to the “known” (observed) number. I have not verified this by looking at the NEOcam calibration models and results. When I present the goodness of fit of a model, I present “observed minus calculated” results for the calibration objects, not the accepted observed value. Perhaps the table to which Myhrvold refers is for something else, such as a “final table of asteroid diameters” in which table entries for known diameters are legitimate. But these entries are generally noted in the footnotes as observed (known) values, not the model result values. Time will tell.
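For readers less familiar with the thermal side of this, here is a small Python sketch of the Planck function. It shows why thermal emission at short infrared wavelengths is so sensitive to temperature, and hence why, for warm NEOs, the 2-5 micron bands hold a mix of thermal and reflected flux, while for cooler objects beyond 3 A.U. the thermal share there is far smaller. The band centres (3.4 and 4.6 microns, WISE bands 1 and 2) and the temperatures (300 K and 170 K) are illustrative assumptions. The `spectral_emissivity` argument is the emissivity at that wavelength, which need not equal the bolometric value; the sketch models neither reflected sunlight nor any NEOWISE procedure.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 299792458.0      # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K


def planck_radiance(wavelength_m, temp_k):
    """Blackbody spectral radiance B_lambda(T) in W m^-2 sr^-1 m^-1."""
    x = H * C / (wavelength_m * K_B * temp_k)
    return 2.0 * H * C ** 2 / wavelength_m ** 5 / math.expm1(x)


def thermal_radiance(wavelength_m, temp_k, spectral_emissivity):
    """Thermal radiance eps_lambda * B_lambda(T).

    spectral_emissivity is the emissivity at this wavelength; substituting a
    bolometric (wavelength-averaged) value here is the kind of mixing the
    comment above is concerned about.
    """
    return spectral_emissivity * planck_radiance(wavelength_m, temp_k)


BANDS_UM = (3.4, 4.6)     # assumed band centres, microns
TEMPS_K = (300.0, 170.0)  # hypothetical warm NEO vs cooler distant object

for band in BANDS_UM:
    warm, cool = (planck_radiance(band * 1e-6, t) for t in TEMPS_K)
    print(f"{band} um: B(300 K) / B(170 K) = {warm / cool:.3g}")
```

Under these assumptions the warm object outshines the cool one thermally by orders of magnitude in both bands, and the contrast is larger at the shorter wavelength, so a small emissivity error matters most exactly where thermal and reflected light overlap.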

I do have a comment on “The real test of models (including Myhrvold’s) is how well they agree with cases of known diameter (for which there are many asteroids).” I philosophically disagree with that statement, although there may be more implied by the author than I read. To me the real test of a model of some physical system is a combination of how well the model uses physics-based fundamentals, as opposed to a mathematical parameter-driven fit to the data, and the goodness of fit. A physics-based model has predictive power one can use with confidence. I get the feeling (based on the history of the business) that the NEOcam models are influenced somewhat by the parameter-fit side, though I do not know for sure. Now, I do not know if the NEOcam model is “good enough” for the purposes of the NEOcam mission. If so, then Myhrvold’s dissertation, at least with respect to the NEOcam mission, is making a mountain out of a molehill. However, with respect to the larger picture of asteroid science, a better physics-based model is always welcome.

Finally, a personal comment. I’ve found this exercise, commenting on a topic I kinda’ understand, a useful calibration of comments on blogs. Some comments are reactionary and frankly off base. But as a reader, if you’re not familiar with the specific science you may not recognize these reactionary statements as such and be led to unwarranted conclusions. Best regards, JCG

[Response: Physicist does a lot of calculations about how to throw a football. Takes into account air resistance, its temperature and humidity dependence, the complex texture of the surface of a football. Then he puts a paper on arXiv, together with a press release, about how Tom Brady and Peyton Manning are incompetent boobs, doesn’t agree that the real test is how well they connect with their receivers, and says we shouldn’t have confidence in any quarterback whose technique doesn’t use proper physics-based fundamentals.

I quite agree that a better physics-based model is always welcome. But I’ll go with the guys who have 6 super bowl rings. Best regards.]

It is a sorry state of affairs when the phrase “US presidential hopefuls” can be used offhandedly as a synonym for someone who is clueless or deceitful.

In my opinion the first statistical error is to call the slope of the OLS regression on 15 years of data a “trend” of the underlying time series, especially without mentioning the uncertainty.

Repeating their calculation, we find (for HadCRUT4.4 in the period 1998-2013) that the “trend” is 0.05 ± 0.12 °C per decade, and the correlation (whatever measure is used) close to 0. Mostly noise and just a small signal.

For GISS it is only a little bit better: 0.11 ± 0.11.
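For anyone wanting to repeat this kind of calculation, here is a minimal Python sketch that returns an OLS trend per decade with a ±2σ range. Which uncertainty the figures above use is not stated, so this is an assumption: plain OLS standard errors, with an optional AR(1) adjustment that shrinks the effective sample size to n(1 - r)/(1 + r), one common choice for autocorrelated residuals. No real data are included; feed it annual or monthly anomalies (with decimal years) from whichever dataset you like.

```python
import math
import statistics


def trend_per_decade(years, temps, ar1_adjust=False):
    """OLS trend of temps against years, returned as (trend, 2-sigma), per decade.

    years:      time coordinate in (possibly fractional) years
    temps:      temperature anomalies, same length as years
    ar1_adjust: if True, inflate the standard error using the lag-1
                autocorrelation of the residuals (effective sample size)
    """
    n = len(years)
    if n < 3 or n != len(temps):
        raise ValueError("need at least 3 paired values")
    mx = statistics.fmean(years)
    my = statistics.fmean(temps)
    sxx = sum((x - mx) ** 2 for x in years)
    slope = sum((x - mx) * (y - my) for x, y in zip(years, temps)) / sxx
    intercept = my - slope * mx
    resid = [y - (intercept + slope * x) for x, y in zip(years, temps)]
    ss_resid = sum(r * r for r in resid)
    se = math.sqrt(ss_resid / (n - 2) / sxx)

    if ar1_adjust and ss_resid > 0:
        # OLS residuals have zero mean, so this is their lag-1 autocorrelation.
        r1 = sum(a * b for a, b in zip(resid, resid[1:])) / ss_resid
        r1 = max(r1, 0.0)  # choice made here: never shrink the error bars
        n_eff = n * (1 - r1) / (1 + r1)
        if n_eff <= 2:
            raise ValueError("too few effective degrees of freedom")
        se *= math.sqrt((n - 2) / (n_eff - 2))

    return 10.0 * slope, 2.0 * 10.0 * se


# Toy numbers only, not real temperature data.
trend, two_sigma = trend_per_decade([0, 1, 2, 3, 4], [0.0, 1.0, 1.0, 2.0, 3.0])
print(f"{trend:.2f} +/- {two_sigma:.2f} per decade")
```

With the toy input this prints 7.00 +/- 2.00 per decade. The ±2σ convention and clamping r at zero are choices made for this sketch, so it will not necessarily reproduce the figures quoted above.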

Physicists can apparently “see” reality just a little bit better than the rest of us, I guess. Or so Fyfe et al. seem to assert.

UK Met Office has released the April 2016 anomaly.

Along with NOAA and JMA, this marks the 12th month in a row where each monthly anomaly has been the highest on record for the month.

http://www.metoffice.gov.uk/hadobs/hadcrut4/data/current/time_series/HadCRUT.4.4.0.0.monthly_ns_avg.txt

Thanks, Barry. I was just looking for that yesterday, and it wasn’t up then! You’re quick.

The fundamental numbers that concern me are the rates of carbon emissions. To date we’ve watched them continue to accelerate, when they should instead already be nose-diving.

http://insideclimatenews.org/news/19052016/global-co2-emissions-still-accelerating-noaa-greenhouse-gas-index

And now it seems to be causing positive feedback loops in the system:

http://www.theguardian.com/environment/2016/jun/01/alaska-wildfires-climate-change

And some of those aforementioned numbers:

http://archive.is/teWqp

We have a Serious Problem, people…

And this:

http://inhabitat.com/arctic-temperatures-are-literally-off-the-charts/

I hope that everyone has their +3 vorpal swords at the ready, because there’s a mess of ecological trouble coming.

Alas, even if our “snicker-snacking” is entirely successful going forward, there isn’t any “galumphing back.” Too many losses already, and too much change “baked in.”

http://hubpages.com/politics/Climate-Change-How-Much-Time-Do-We-Have

Still, that Jabberwock *must* die.