Pursuant to the subject of step change and telling the difference from linear increase, a reader pointed to a post on Roger Pielke’s site which claims to prove a step change (rather than linear increase) in two time series, of warm and cold nights over South America from 1960 to 2000. There’s a good bit of hand-waving about visual inspection of graphs (which really amounts to “it sure looks like a step change”), but the essence of the “proof” comes from modelling the data as two different straight lines, one fit to data before the purported step change, the other fit to data after the purported step change. The stated conclusion is:

… the slopes before and after the change points are not statistically significant (P > 0.05) and thus not significantly different from zero. In each data series, therefore, μ1 ≠ μ2 and β1 = β2 = 0, proving, beyond doubt, the presence of flat step changes in the two data series.

Permit me to doubt.

I tested the validity of such proof by generating some artificial data which were the sum of a linear trend and random (Gaussian white) noise, so we already know the right answer. Then I subjected the series to change-point detection. Then I fit the stated model (one straight line before the change point, another independent one after the change point). I then noted whether or not the slopes of both straight line segments failed statistical significance — which, it is suggested, is sufficient for “proving, beyond doubt, the presence of flat step changes.”

If it’s even “proof” in the statistical sense (let alone “beyond doubt”) then no more than 5% of such simulations should exhibit this behavior. And what was the result? Out of 1000 simulations, 580 (58%) met the stated “proof” criterion, in spite of the fact that they were all artificial data with a known, linear trend. More than half the time, the data indicated “proof, beyond doubt” of the wrong answer.

It’s not that the trend itself was too small to detect. In 998 out of 1000 cases (99.8%) the linear trend (for the entire data set) passed statistical significance.
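For anyone who wants to try this at home, here's a minimal sketch of that kind of experiment in plain Python. It is not the code behind the numbers above. The series length (41 points), trend slope (0.07 per time step), noise level (1.0), minimum segment length (5) and the helper names `t_crit`, `ols`, `best_step` and `simulate` are all assumptions chosen for illustration. The "change point" is simply the split that gives the best-fitting flat step, and the critical value of Student's t comes from a series approximation rather than exact tables, so don't expect it to reproduce 58% exactly.

```python
import math
import random


def t_crit(df, z=1.959964):
    """Approximate two-sided 5% critical value of Student's t.

    Series expansion in 1/df around the normal quantile z; an approximation,
    not an exact table lookup.
    """
    return z + (z**3 + z) / (4 * df) + (5 * z**5 + 16 * z**3 + 3 * z) / (96 * df**2)


def ols(t, y):
    """Least-squares straight line: return (slope, standard error of slope)."""
    n = len(t)
    tbar, ybar = sum(t) / n, sum(y) / n
    sxx = sum((ti - tbar) ** 2 for ti in t)
    slope = sum((ti - tbar) * (yi - ybar) for ti, yi in zip(t, y)) / sxx
    rss = sum((yi - ybar - slope * (ti - tbar)) ** 2 for ti, yi in zip(t, y))
    return slope, math.sqrt(rss / (n - 2) / sxx)


def significant(t, y):
    """True if the fitted slope differs from zero at the (approximate) 5% level."""
    slope, se = ols(t, y)
    return abs(slope) > t_crit(len(t) - 2) * se


def best_step(y, min_seg=5):
    """Split index k that minimises the residual sum of squares of a flat step model."""
    def rss_flat(seg):
        m = sum(seg) / len(seg)
        return sum((v - m) ** 2 for v in seg)

    return min(range(min_seg, len(y) - min_seg + 1),
               key=lambda k: rss_flat(y[:k]) + rss_flat(y[k:]))


def simulate(n_sims=200, n=41, slope=0.07, sigma=1.0, seed=0):
    """Return (fraction with significant overall trend, fraction meeting the 'proof' criterion).

    All default parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    t = list(range(n))
    full_sig = false_proof = 0
    for _ in range(n_sims):
        # Known answer: linear trend plus Gaussian white noise.
        y = [slope * ti + rng.gauss(0.0, sigma) for ti in t]
        full_sig += significant(t, y)
        k = best_step(y)
        # The "proof" criterion: neither segment slope is significant.
        if not significant(t[:k], y[:k]) and not significant(t[k:], y[k:]):
            false_proof += 1
    return full_sig / n_sims, false_proof / n_sims


if __name__ == "__main__":
    full, fooled = simulate()
    print(f"overall trend significant: {full:.1%}")
    print(f"'step change proven':      {fooled:.1%}")
```

Changing `slope` or `sigma` changes the signal-to-noise ratio, which is the knob that matters here.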

The fact is that unless the signal-to-noise ratio is very high, the difference between linear trend and step change is damn hard to establish. Even when we know the trend is linear, the response to change-point detection will be quite strong (and will pass statistical significance with flying colors) and it’s highly likely (in the simulations, more than half the time) that the slopes of the linear fits to “before” and “after” segments can fail statistical significance.

In fact, in these simulations, even if you compare the linear model (which is right) to the step-change model (which is wrong) using AIC, the wrong model had better AIC in 238 out of 1000 cases (about 24%).
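Here's a rough sketch of how such a comparison can be set up, again in plain Python and again under assumptions of my choosing rather than the exact settings used for the numbers above. It uses the usual Gaussian least-squares form, AIC = n ln(RSS/n) + 2k (dropping a constant common to both models), and counts 3 parameters for the linear model (intercept, slope, noise variance) and 4 for the step model (two means, the time of the step, noise variance). The function names and the default series length, slope and noise level are hypothetical. BIC, which replaces the penalty 2k with k ln(n), is included for comparison.

```python
import math
import random


def rss_linear(y):
    """Residual sum of squares of a least-squares straight line through y."""
    n = len(y)
    tbar, ybar = (n - 1) / 2, sum(y) / n
    sxx = sum((i - tbar) ** 2 for i in range(n))
    slope = sum((i - tbar) * (yi - ybar) for i, yi in enumerate(y)) / sxx
    return sum((yi - ybar - slope * (i - tbar)) ** 2 for i, yi in enumerate(y))


def rss_step(y, min_seg=5):
    """Smallest residual sum of squares over all flat two-level (step) models."""
    def rss_flat(seg):
        m = sum(seg) / len(seg)
        return sum((v - m) ** 2 for v in seg)

    return min(rss_flat(y[:k]) + rss_flat(y[k:])
               for k in range(min_seg, len(y) - min_seg + 1))


def aic(rss, n, k):
    """AIC for a Gaussian least-squares fit with k parameters (constant dropped)."""
    return n * math.log(rss / n) + 2 * k


def bic(rss, n, k):
    """BIC for a Gaussian least-squares fit with k parameters (constant dropped)."""
    return n * math.log(rss / n) + k * math.log(n)


def compare(n_sims=200, n=41, slope=0.07, sigma=1.0, seed=0):
    """Fraction of linear-trend simulations in which the step model wins by AIC and by BIC.

    Default parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    wins_aic = wins_bic = 0
    for _ in range(n_sims):
        y = [slope * i + rng.gauss(0.0, sigma) for i in range(n)]
        rl, rs = rss_linear(y), rss_step(y)
        # Linear model: 3 parameters. Step model: 4, since the step time is fitted too.
        wins_aic += aic(rs, n, 4) < aic(rl, n, 3)
        wins_bic += bic(rs, n, 4) < bic(rl, n, 3)
    return wins_aic / n_sims, wins_bic / n_sims


if __name__ == "__main__":
    by_aic, by_bic = compare()
    print(f"step model preferred by AIC: {by_aic:.1%}")
    print(f"step model preferred by BIC: {by_bic:.1%}")
```

Because ln(n) exceeds 2 for any series longer than 7 points, BIC charges more for the extra parameter, so the step model can never win by BIC in a case where it loses by AIC.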

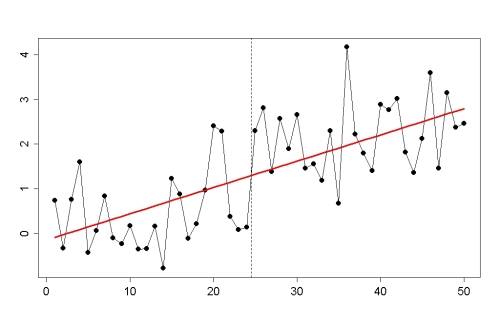

An example, which is not atypical, is the last of the 1000 simulated data sets. Here’s the data, with a linear trend line superimposed:

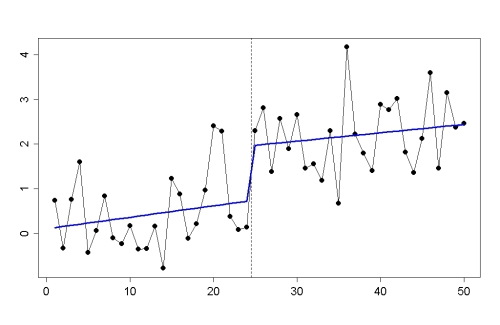

Here’s the step-change model, which not only has smaller residuals but even (like 24% of the samples) has better AIC:

And here’s the two-linear-segments model, for which (like over half of the samples) neither of the linear segment slopes is statistically significant:

Simply put, what the idea fails to account for is that when a linear trend is present, if you pick the single moment which gives the strongest “step change” model (which is what change point detection does), you have in a sense “hand-picked” a moment which is most unfavorable for establishing significant trend for its subsections. It’s easy to see why someone who hasn’t thought the problem through closely enough would miss this, and be fooled into overestimating the significance of this test. Extrapolating to “proof, beyond doubt” is just a bridge too far.
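There's also some plain arithmetic working against the sub-segments. As a sketch, assume n evenly spaced times t = 1, …, n and white noise with standard deviation σ (the same idealised setup as the simulations; the symbols are notation introduced here for illustration). Then the standard error of a least-squares slope is

$$
\mathrm{SE}(\hat\beta) \;=\; \frac{\sigma}{\sqrt{\sum_{i=1}^{n}(t_i-\bar t)^2}} \;=\; \sigma\sqrt{\frac{12}{n(n^2-1)}} \;\approx\; \frac{\sqrt{12}\,\sigma}{n^{3/2}} .
$$

Cut the series in half and the standard error grows by a factor of about 2^{3/2} ≈ 2.8. So a trend that sits at, say, 5 standard errors over the full span (a made-up round number) sits at under 2 standard errors in each half, and that's before the choice of change point makes matters worse.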

Do the series of warm nights and cold nights over South America follow linear increase or step change? I don’t know, I haven’t seen that data. If slopes before and after a purported step change both fail statistical significance, is that “proof, beyond doubt” of a step change, or even disproof of a linear trend? No.

Once again very enlightening. Properly used stats make a powerful tool.

If steps disprove global warming, then where are the down steps? Perhaps if I knew more statistics then the difference between linear trend plus normal variation and steps would excite me. Either way, it is warming on what should be slow cooling according to natural cycles.

So in two years we will have solar max, and sometime we will have an El Nino so another big step up.

That’s the remarkable part, isn’t it? These oscillations and steps just always seem to mimic an actual trend somehow.

I was just reading Science’s retraction of Lombardi et al (2009) (XMRV paper). The last line of the letter reads:

“We regret the time and resources that the scientific community has devoted to unsuccessful attempts to replicate these results.”

Wouldn’t it be nice to get a similar statement from Dr. Pielke Sr. concerning his beyond a doubt certainty?

Note that this retraction was not from the authors, so I rather doubt we will see one from Pielke Sr. Now a journal retracting some of his “work” with these words – would be delicious.

I wonder how hard the author had to work to find all those citations for the idea that it’s good statistics to look at data before you decide which statistical test to apply to that very same data. I suspect (hope) that the cited papers would warn folks taking this approach to repeat their experiments… a luxury not available in this case.

Note to readers: that rebuttal on Pielke’s site is by the author of the original “yay! a step” comment, Reynold Stone. The fact that Pielke includes the author attribution in the title makes it confusing (though the text does say “we” to refer to the original RS comment). I do love the line about visual inspection.

But you cheated by testing data that you already knew the form of! You also thought about it as well as applying statistical tests. I’m pretty sure that thinking thing is not allowed.

You really should have to fight with one arm behind your back…

BTW, this would make a nice exercise for students doing stats at uni.

It would indeed, I set a very similar one for my students this year (detecting a breakpoint in surface temperatures by Bayesian and frequentist procedures). Might use this one next year!

It would be interesting to see how the Bayes factor between a linear trend and the step change model worked out.

Punctuated equilibrium anyone? Whether it’s in steps or a straight line or a combination of both, it is still going up.

Hmm, Tamino, is the time of the step one of the parameters in the step model? Does BIC perform better in this case?

[Response: Yes, and yes.]

Excellent explanation of the implicit cherry picking. We discussed issues around statistical significance with Prof. Pielke on SkS (relating to the flattening of the global surface temperature trends post 2000). He stated repeatedly that it was obvious that there had been a change, but would not be drawn into a discussion of the statistics (i.e. to demonstrate statistically significant evidence for his hypothesis). Not what you would expect from a scientist who works regularly with data.

I am almost tempted to ask how many regions he looked at before he found a region that he wanted to talk about.

Oo, give in to the temptation Dikran Marsupial!

Ask, ask!!

I can resist anything except temptation… “how many regions did he look at before he found a region that he wanted to talk about, that’s what I want to know!!!!” ;o)

This post is a poster child for why monte-carlo simulations are wonderful (since that’s essentially what you’ve done).

One of my favorite examples of step vs. linear occurs if you look at the warming trend from 1977-1987, and then again from 1987-97. Each ten-year interval seems to have no statistically significant trend. Yet the 20 year interval has a significant trend–it kind of puts paid to the “no warming since 1998…oh wait, I mean 2000, oh…damn” arguments.

Will people ever realize that a non-significant result in statistics is not proof that a pattern does not exist? It is probably the most common error of interpretation I see.

Nope. It’s pretty much impossible to convince folks who don’t think about it often (or are unwilling to admit error) that: “If A is true, B is likely false” is not equivalent to “If A is false, then B is likely true.”

It’s hard enough to convince folks that “If A, then B” is not equivalent to “If B, then A.” Making B itself complex goes one step further.

I just had to deal with this in explaining to someone how 3 different data sets differed… (1) Set A was distinguishable from B and C. (2) Sets B and C were indistinguishable (3) Set A was distinguishable from B, but not C. The person I was talking with could not believe that 3 was possible given 1 and 2.

Well, “Set A was distinguishable from B and C” is ambiguous. It could mean “Set A was distinguishable from set B and distinguishable from set C,” or it could mean “Set A was distinguishable from B and C together.”

The first reading contradicts your proposition 3. . . I don’t know if that has anything to do with the discussion you had, of course.

True. Meant that to be “A is probably not B. A may or may not be C. B may or may not be C.”

I think I got it–this is awkward in words, isn’t it? And generally, I prefer them. . .

Not at Watts’ they won’t.

It seems like this is a case where it would be particularly useful to give confidence limits rather than p values, or even plot the envelope of possible slopes for each line segment. This might drive the point home that while the slopes of the “flat” segments are not statistically different from zero, neither are they statistically different from a warming trend (even trends much greater than the true value, in many cases). It might, depending on whom one is talking to.

See also Tisdale’s claims of ‘step-change’ behavior from the ENSO over the last 30 years. His ‘flat’ time series between the steps are on the order of 4, 9, and 12 years respectively – far far too short for any statistical trend significance.

Folks just can’t seem to step away from the ‘eyecrometer’…

This whole step change reminded me of this excellent graph by Skeptical Science:

Thanks Tamino–you often show really nice demonstrations of the power of simulated data to evaluate statistical tests.

Tamino- I don’t know the right place to post this question, but I noticed a paper in Science (Dec 16, 2011, p. 1518) on an alternative to Pearson’s correlation coefficient for large data sets and without presumption of normally distributed errors. It was also useful for detecting non-conventional relationships such as a circle of points in 2D space. I noticed one figure (2) that featured hockey sticks (B, C and F). Wondering if this test might be useful in the never-ending wars over Michael Mann’s and others’ work on the paleoclimate record.

[Response: I saw that too, and it seemed like a fascinating approach. It’s on my “to do list” to find out more about it.]

You’ll love this: “the long warming trend shown by the data is not really consistent with the model predictions, even after the data’s been taken to a Cuban prison by Tamino and tortured till it confessed”

If you don’t have facts or logic, flights of rhetoric must suffice.

I presume that by “a Cuban prison” they mean Guantanamo Bay.

A cuban prison, eh? Nice double shot – associate the name with strange foreign communist dictatorships and torture.

Given a monotonic forcing and a frictive system, step changes in a single direction are going to dominate. The simplest examples are moving a refrigerator or earthquakes. In such systems the starting friction exceeds that when the system is moving. In both cases the system moves in jumps and it is not unreasonable to think climate will respond in the same way on all scales.

Which model is correct is actually a question that can only be discussed in physical terms, not statistical ones, as long as the jumps are small.

Besides, they ain’t jumps they are jerks.

Yes, they are indeed jerks.

Eli,

Yes, “stiction”, or static friction, well known in engineering (and everyday life). Not sure where the friction applies in temperature changes, though?

“Stiction” in the surface temperature trend comes readily from changes in ocean currents. The ocean is the great heat reservoir as you recall. You may also recall ENSO. QED

I believe Eli refers to the possibility of systems nearing a tipping point to react less to a forcing, until the tipping point is crossed and the system lurches to a new area of relative stability.

For example

Slowing down as an early warning signal for abrupt climate change

http://www.pnas.org/content/105/38/14308.short

Predicting climate tipping as a noisy bifurcation: a review

http://eprints.port.ac.uk/3527/

Not sure that the presence or absence of steps proves or disproves anything about the effect our emissions are having on the planet. An upward trending curve is still an upward trending curve whether it is depicted as a smooth curve or a series of steps, especially where the number of steps approaches infinite.

Step right up and you will see,

The Cirque du Soily coterie,

“The Greatest show on Planet Earth”,

A real knee-slapper, for what it’s worth…

From “Cirque du Soily”

“Soleil”–or is the spelling part of a joke I’m not getting?

Well, the 4th stanza is as follows

“In the Center Ring’s the Main Event,

Way up near the top of the tent,

Inhoax uses a Big Mac and Tire,

To balance himself upon the wire”

Does that answer your question?

“Inhoax. . . Mac and Tire. . .” I suppose it does.

Mr. Step Change misses the point that he has created a bar chart and the bar chart shows an upward trend. Economists use bar charts all the time to show trends. Oy!

In a real-life planetary-wide physical system, like, say, the Earth climate, what kind of events would cause “subtle” (i.e. hardly distinguishable from linear) step changes in measurements?

I can think of one, changing the measurement method, or the measurement tools.

What other kind of event would possibly have that (near-immediate) effect on the underlying temperatures ?

Well, biological responses, for one.

Consider an imaginary dominant plant in a particular region: able to thrive up to (say, arbitrarily) 25C, and sensitive to CO2 fertilization. As CO2 increases, its population increases, creating, let us say for argument’s sake, a dense growth characterized by high levels of evapotranspiration which have a significant negative (quasi-)radiative forcing. This slows regional warming, and the region therefore tends to lag the global warming trend. In fact, our imaginary plant creates sort of a negative feedback by proxy.

Then the general warming forces the region over the 25 C threshold. The plants don’t thrive anymore; in fact, they begin, slowly at first, to die off. Evapotranspiration sags; the region becomes a CO2 source rather than a sink (though unless it’s a very large region indeed, I wouldn’t think this would affect the global level much.) Maybe albedo is affected; certainly the soil is more exposed to drying influences, and the whole hydrology is affected. The result is a region warming faster than the globe as a whole.

Voila–‘stiction.’ Imaginary, as I said, and probably much simpler than anything apt to happen in reality. But you asked what could ‘possibly’ induce near-linear steps in temperature, and I had this idea. . .

Thank you.

It occurs to me that most events that could possibly induce an almost stepwise climate change would be obvious and catastrophic, like, say, a volcanic eruption or sudden desertification of a wide area, although, in practical terms, their effects would be gradual, but, presumably, rapid enough to appear as sudden once blurred by the noise.

A major change in ocean circulation could lead to more or less phytoplankton productivity, changing ocean albedo over a large area instantly.

In a similar vein, such a change could alter rainfall patterns and quickly change grassland to desert or vice versa.

IF (BIG IF) the effect of that change was to stabilise the change – to the extent that it was stable relative to normal variation then you’d see a spontaneous ‘Step’ in the data.

That’s the killer; you’d have to change a pretty big area of the surface, and it has to be variability-proof. You’d notice that kind of thing..

I am surprised that the Chow test wasn’t used to test for the breakpoint in the study. It’s an established method and simpler than the other tests, especially when the breakpoint can be roughly identified from a visual representation of the data. Any idea why this wasn’t employed? (Using the F-stat of the Chow test to see if the slopes of the two lines were similar would also have passed your tests, Tamino)

It’s been done.

However, doing one doesn’t mean that it was appropriate to do so. Re-read this post of Tamino’s and the preceding one, and also the Skeptical Science take on the subject, and you might understand why.

Scott,

It appears that to apply the Chow test properly, you need to apply it to a wide range of viewpoints, to pick out the best place for the break. I suspect Pielke didn’t use it because he knew damn well it wouldn’t support the breaks he wanted.

Wide range of BREAK points! Jesus, I’m tired…

Agh. I started posting again at WTFUWT after two years of resisting temptation. Teh stupid was just too palpable to resist responding to. Though, I suspect this foray won’t last more than 24hrs. Dog help me.

Steve, is that a picture of you beating your head against the wall?

Might as well be. I even got told off by the Potty Peer himself. He said my post was ‘angry’. I suppose it was. I’m angry that the likes of paid time wasters like Monckton still have an audience for their pseudo-scientific claptrap.

The important point is that the forcing is monotonic. The nature of the response is second order.

But that would violate Watt’s Law, wouldn’t it? “A linear forcing always results in a linear response.” ;-)

… and the fake skeptic response is ginantonic.

Bob Tisdale has posted a new response to the Foster & Rahmstorf 2011 paper, in which he seems to claim that the filtering process for ENSO doesn’t take into account the “residual heat” from a strong El Nino that, through an oceanic Rossby wave, travels back toward the western Pacific, above the equator and out of the standard ENSO measurement, such that the linear rise found in FR 2011 does not account for this “residual” El Nino heat, and it is therefore included in the linear rise attributed to anthropogenic warming. In looking at the FR 2011 paper once more, it seems this “residual” El Nino heat may in fact have been accounted for in the best fit lag time process used, but I would greatly appreciate a more explicit response to this.

On a wider issue of course, Bob T. has for years been pointing to the “step wise” increase from El Ninos as being the primary cause of the warming since the mid-70’s, with the implicit assumption that at some point a “step down” would have to occur. If the FR 2011 paper is essentially correct, of course that “step down” isn’t coming.

Why is Bob Tisdale’s opinion relevant to any science except abnormal psychology?

Don’t disagree, but his site is one of the few places one can find recent ARGO graphs. So I go there to look at the pictures!

Do be more specific, R. Gates. Tisdale re-posts an expanded part of his original response to FR2011 & does this because he made such a pig’s ear of the bits he now leaves out.

He now asserts that FR2011 is wrong to use a linear relationship between global mean temperature & ENSO index because ENSO is more complex than that. From this point in his argument he is not actually examining FR2011 or its methods but creating his own pseudo-theory (complete with “steps”). Because Tisdale is happy with the results of his pseudo-theory, he concludes FR2011 is wrong. (It’s difficult to know just why.) I assume he also concludes that the Laws of Thermodynamics need scrapping because that is also strongly implied by his wonderful pseudo-theory.

And before folk jump in and slag the poor bloke off, I must make clear that I really appreciate Tisdale coz he makes me laugh.

^ post of the week?

I read the paper on Pielke’s web site and think it is probably correct in its identification of a step change. You’re right that that particular statement “proving, beyond doubt”, with non-significant trends around a step is a silly one. The visual inspection stuff is also quite silly.

But the tests that Stone ran are quite comprehensive, and if the Rodionov test with white noise removed was run (he doesn’t say), then the results could be regarded as pretty solid.

But the paper is also wrong about what it suggests (we argue that the case for anthropogenic global warming/climate change is unsupported by the Vincent et al. (2005) study). The lack of trending behaviour in a variable doesn’t say anything about AGW. It actually casts doubt on the linear signal-to-noise model of climate change. This model suggests that variability around the mean trend is natural. However, if the anthropogenic signal itself is episodic (coming in jerks as Eli suggests upthread), and is also linked with natural variability then Stanley, we have a fine mess indeed.

And if this is the case why does it matter? It matters because extremes can change quite rapidly, which is a matter of concern for adaptation and risk management in general. I have a paper in press at JGR which describes this for SE Australia and presented a poster on climate shifts at the recent AGU meeting. What it does to extreme temperatures in SE Australia is described here

Roger Jones,

Tamino did something on tropical cyclones in the Atlantic a while back that may be of interest to you. It identified a stepwise series of regimes indicative of nonlinear dynamics. The step-wise behavior has reminded me in the past of chaotic behavior involved in water drops from a faucet as one gradually increases the water flow.

Please see:

I am likewise reminded of the following passage:

Timothy Chase – thanks for that. The latter quote is especially useful – I remember the paper but not the passage. Totally agree with the second alternative. Have been writing up results for NZ temperature today and that’s what it seems to show. In fact, that’s the case for all four regions I’ve looked at in detail.

Perhaps you meant “red noise removed”?

since that is actually what Rodionov suggests

—

from “A Sequential Method for Detecting Regime Shifts in the Mean and Variance,” Sergei N. Rodionov

And Horatio is not a statistician, so he is not familiar with the specific statistical meaning of the terminology “pretty solid” …

~@:>

As many people have pointed out, Earth’s climate being a nonlinear system, you do expect it to jump in steps. The 2007 collapse of the Arctic ice cover is a good example of such a jump. Over the whole planet, such behaviour will average out somewhat, but some jumps might still be detectable. Claiming that jumps disprove global warming indicates that they do not have a clue about the fundamental physics of the phenomenon. Of course, nonlinearity becomes handy when claiming that since the climate is nonlinear by nature, we cannot predict the future of climate.

Roger Jones – I am also reminded that there should be differences in terms of how climate forcings project onto the different modes of natural variability. In particular, the following passage regarding radiative forcing efficacy:

… although I somewhat doubt that this will make much of a difference on a local or regional scale. But perhaps the papers that are referenced will be of some value.

And on a longer time scale

Shuman 2012: Patterns, processes, and impacts of abrupt climate change in a warm world: the past 11,700 years

Abstract

Abrupt environmental changes punctuated the warm Holocene epoch (the past ∼11,700 years), and studies of these episodes can provide insight into the dynamics that produce rapid climate changes, as well as their ecologic, hydrologic, and geomorphic impacts. This review considers the processes that generated warm world abrupt changes and their landscape and resource effects, including nonlinear climate system interactions, as well as the possibility that large climate variability can linearly produce apparent ‘state shifts.’ Representative examples of Holocene changes illustrate processes that could produce future changes, including (1) rapid changes in ice sheets, such as by ca 8200 years before AD 1950, (2) shifts in the behavior of the El Nino-Southern Oscillation (e.g., at ca 5600 years before AD 1950) and Atlantic Meridional Overturning Circulation (e.g., at ca 2700 years before AD 1950), and (3) land–atmosphere feedbacks, such as were possible in North Africa in the mid-Holocene. These case examples, drawn primarily from the Northern Hemisphere, also reveal the dynamics that generate the types of climate change impacts that would be particularly evident to individuals and societies, such as rapid tree species declines (observed to have taken place within as little time as 6–40 years) and persistent shifts in the regional availability of water. Holocene changes also demonstrate that even progressive climate change can produce important abrupt impacts; that increased rates of background climate forcing may increase the frequency of abrupt responses; and that impacts may well depend upon the particular sequence of changes. WIREs Clim Change 2012 doi: 10.1002/wcc.152

ht The AGW Observer

> ARGO graphs

http://www.usgodae.org/argo/argo.html

Is Tisdale making his own graphs?

Hey, y’all–I just published a book (e-book, really, about 40 pp) which will be available through amazon, Kobo, Google Editions, and a couple of others I can’t remember offhand. The title is “The Greenhouse Effect–What It Is, How It Works.” $2.99 if anyone’s interested.

Some step changes are easily testable. A good one is the 451 F test in a pottery kiln. You stick a piece of newspaper in the little spy hole after you’ve shut off the kiln and if it catches on fire, the atmosphere inside is still above 451 F. If dry newspaper acted linearly to heat, we’d be in trouble.

— Horatio Algeranon’s rendering of the classic Led Zeppelin tune

“Stairway to Heaven”


There’s a skeptic who’s sure, temp that goes up is good

And he claims it’s a stairway to heaven

When he gets there he “knows”, if the future’s foreclosed

With a dollar he can get what he came for

Ooh, ooh, and he claims it’s a stairway to heaven

There’s a graph on his blog but he wants to be sure

‘Cause he knows sometimes graphs have two meanings

In a tree by Yamal, there’s a proxy that sings

Somehow all of its temps are misgiven

Ooh, it makes him blunder

Ooh, it makes him blunder

There’s a feeling he gets when he looks at the BEST

And his spirit is crying for leaving

On his blog he has blown rings of smoke through the trees

And confusion for those who stand lurking

Ooh, it makes him blunder

Ooh, it really makes him blunder

And it’s whispered by Willie Soon if we all call the tune

Then the Inhofes will lead us to Reason

And a pay day will dawn for those who stand long

And the board-rooms will echo with laughter

If there’s some chaos in your climate, don’t be alarmed now

It’s just a spring clean for the Maldives

Yes, there are two paths you can go by, but in the long run

There’s no need to change the road you’re on

And it makes him blunder

Your blog is humming and it won’t go, in case you don’t know

James Inhofe’s calling you to join him.

Dear Tony, can you hear the windbag blow and did you know

Your stairway lies on the global temp?

And as we wind on down the road

Our shadows taller than our soul.

There walks a scientist we all know

Who shines white light and wants to show

That he ain’t in it for the gold.

And if you listen very hard

The tune will come to you at last.

When all are one and one is all

To be a rock and not a troll.

But he claims it’s a stairway to heaven…

Very amusing!

David Stockwell responds.